Trust Networks: How We Actually Know Things

Introduction

How do you know what you know? Perception, intuition, empiricism, logical deduction: these are the kinds of answers philosophers have proposed for millennia. And yet when you reflect carefully on your store of knowledge, you'll discover that very little of it is the direct product of such methods. Instead it relies on trust.

Because trusting the right people is so important in life, we have an excellent intuitive understanding of trust. But when we think about knowledge in the abstract we become strangely stupid, and ignore our dependence on trust like camels ignoring the sand under their feet. We imagine that the transfer of information from person to person is a transparent and frictionless process, even though we don't act this way in practice.

Our ignorance is learned. Trust is based on ad hominem reasoning, which evaluates a claim by judging the person making it instead of the claim itself. Since we've been taught to believe that ad hominem isn't a valid form of argument, and that knowledge is based on valid arguments, we assume knowledge can't be based on ad hominems. But this is a mistake. Judgments of trustworthiness, and therefore ad hominems, are both ubiquitous and indispensable, even to the higher realms of knowledge.

In this essay I'll use a series of examples to show just why trust and ad hominem judgments of trustworthiness are so important. I'll begin with exceedingly simple instances of trust-based knowledge in order to demonstrate the concept clearly, and then gradually build on these to show how our political and even scientific knowledge is likewise grounded in trust. In the second part, I'll show how people group into networks of mutually trusting subjects. These trust networks are shaped by social conflicts that impair our ability to produce knowledge and degrade the accuracy of our beliefs—a problem that's particularly damaging in the present day. In the final part, I'll discuss potential remedies.

By drawing your attention to ad hominems, trust networks, and strategies for managing them, I hope I'll help you to reflect on your own beliefs and improve their accuracy.

Contents

Part I: How We Depend On Trust

A. Let's Cheat At Cards (The Basics of Trust)

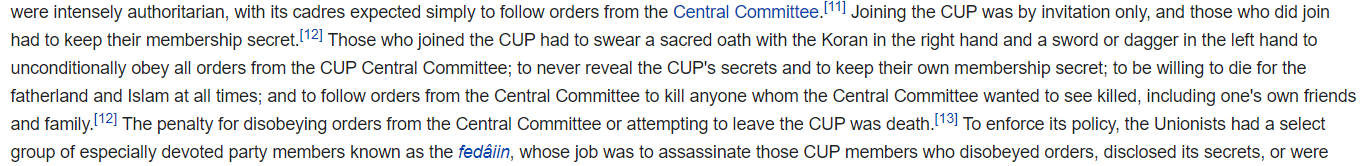

B. Let's Start a War (Political Reporting)

C. Let's Oppress The Peasants In The Name Of Science (As If We Needed An Excuse!)

D. Let's Ad Hominem God (Faith, Paranoia, and Conspiracies)

Part II: Trust Networks and Epistemic Load

A. Let's Fire A Scrupulous Researcher (Trust Networks)

B. Let's Fry Our Brains On Social Media (Signaling Load)

C. Let's Kill A Virtuous Man (Partisan Load)

D. Let's Fight A Dirty War (Epistemic Load)

E. Let's Deal Drugs (Hacking Trust Networks)

Part III: Efficient Stupidity

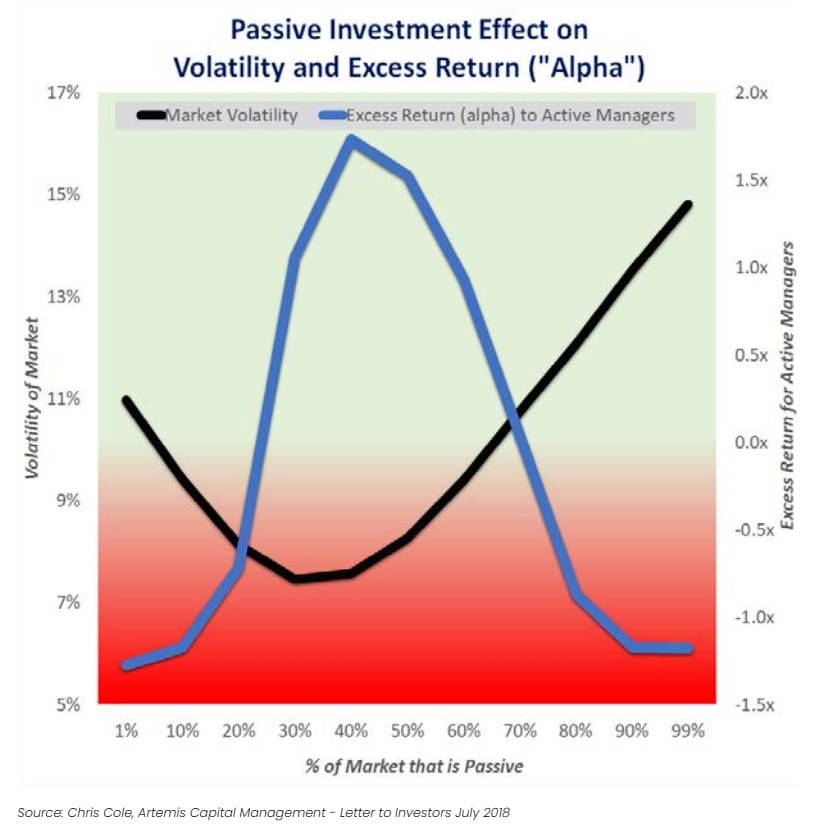

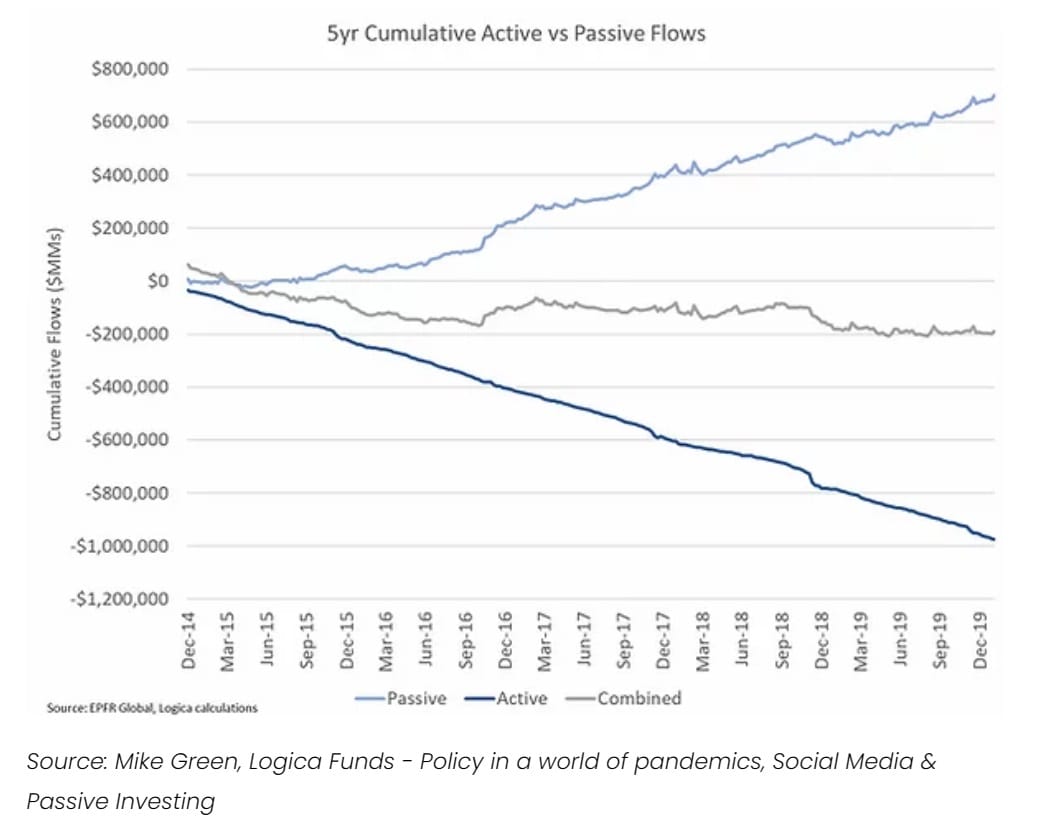

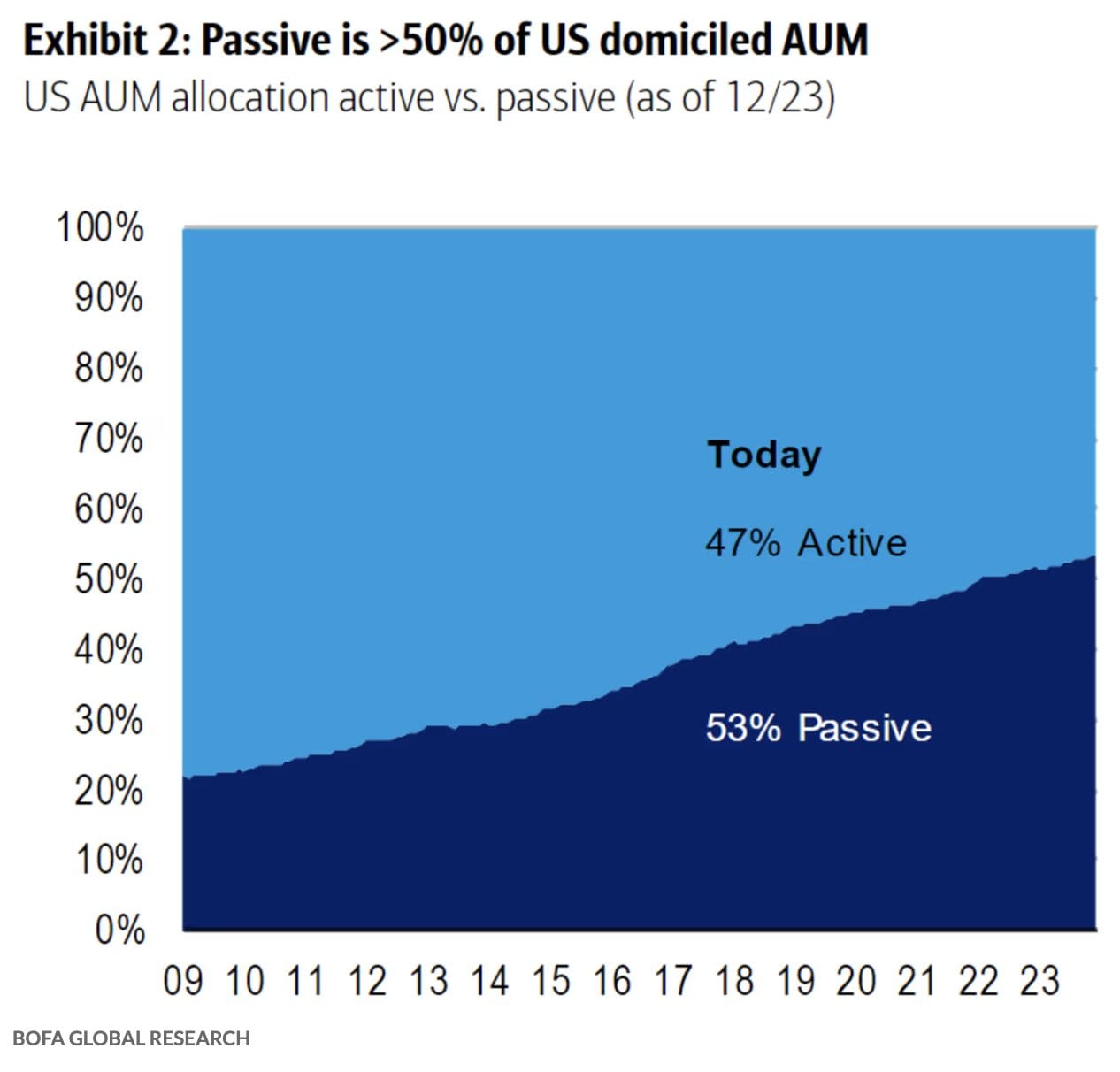

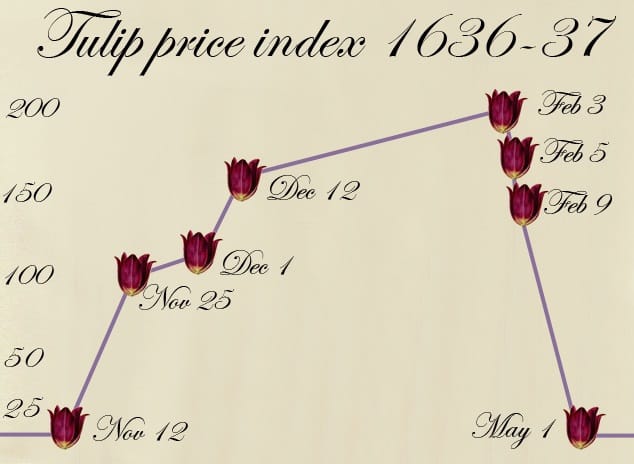

A. Let's Outsource Intelligence (Passive Cognition)

B. Let's Paint It Gray (Trend Following)

C. Let's Start A Stampede (Passive Leverage)

D. Let's Play Opposite Day (Contrarians and Cognitive Altruists)

Part IV: Techniques For Improving Belief Accuracy In A World Of Ad Hominems

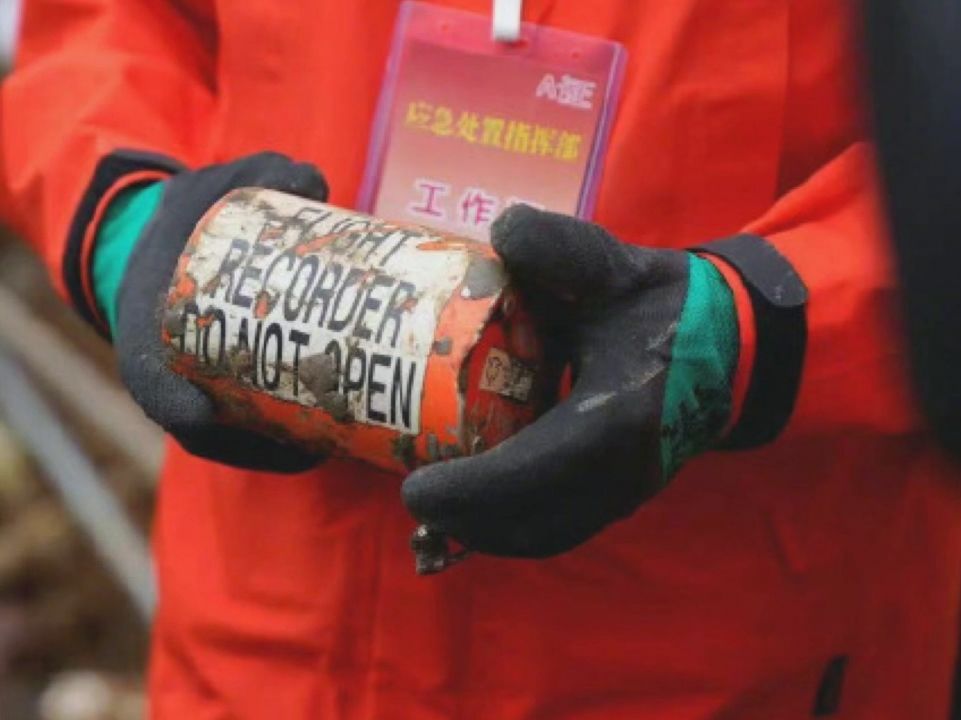

A. Let's Crash A Plane (Black Box Verification)

B. Let's Get Medieval (Social Technologies To Manage Trust)

C. Let's Read Fiction (Techniques For Navigating A World of Ad Hominems)

Part I: How We Depend On Trust

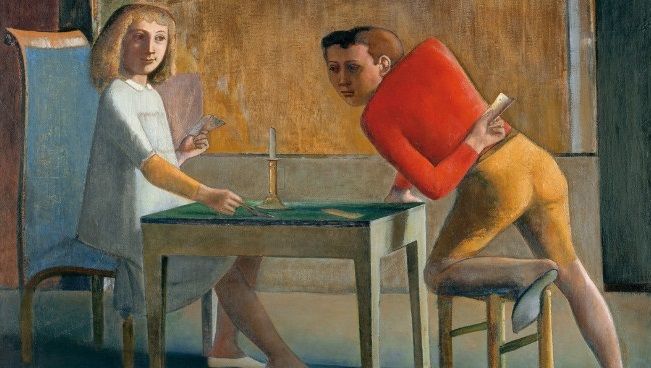

A. Let's Cheat At Cards (The Basics of Trust)

Imagine, dear readers, that you're playing poker. You've a very good hand and you're about to bet the farm on it. Your friend steps behind you and whispers in your ear. He informs you that one of your opponents has a royal flush; you'd better fold now, because otherwise you'll lose everything. Do you trust him? Of course you do. He's your friend! You take his claim as a matter of fact and fold.

Now imagine a very similar scenario. This time it's not your friend but your enemy who claims to have seen your opponent's hand and tells you to fold. He's a jealous bully who's hated you ever since his high school crush spurned him and went to prom with you instead. Do you trust him? Of course you don't. He's your enemy! After deciding he's not clever enough to use reverse psychology, you conclude he must be lying and bet the farm instead of folding.

Imagine the scenario a third time, except in this case it's a mischievous trickster who tells you the same things. He likes playing cruel practical jokes on people. Or again: now the man urging you to fold isn't your enemy per se, but he is the brother of another card player. Do you trust him?

It's obvious that when someone offers you information you can't verify directly, your decision to accept or not accept his information depends on your evaluation of his character. And not only his character, but also his relation to you as a friend or foe, his history of being right or wrong, and even his relation to the other parties affected by your beliefs. In other words, trust-based knowledge depends on ad hominem judgments.

What's perhaps not so obvious is that more sophisticated forms of knowledge depend on trust in much the same way this simple card cheating arrangement does.

B. Let's Start a War (Political Reporting)

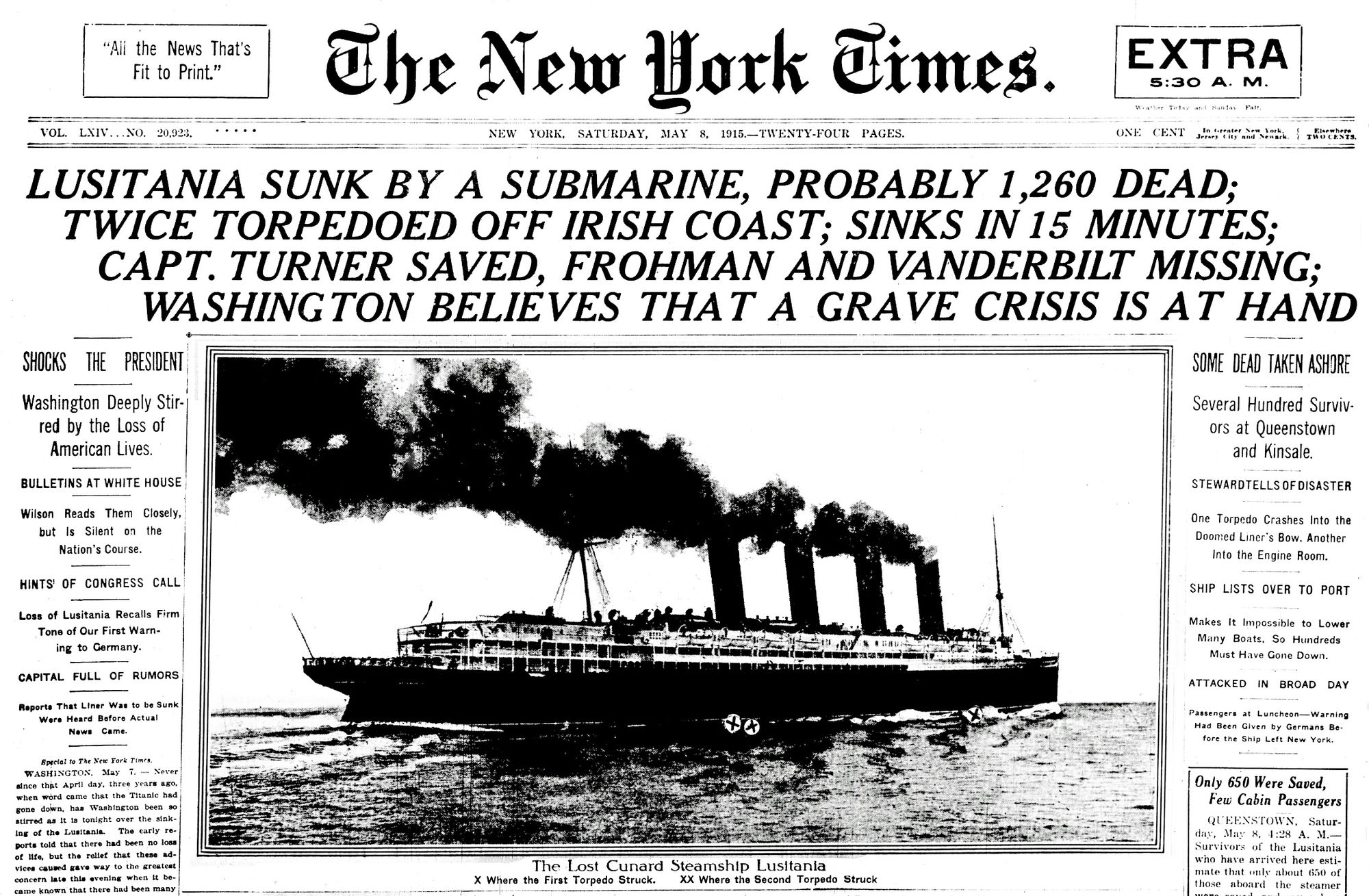

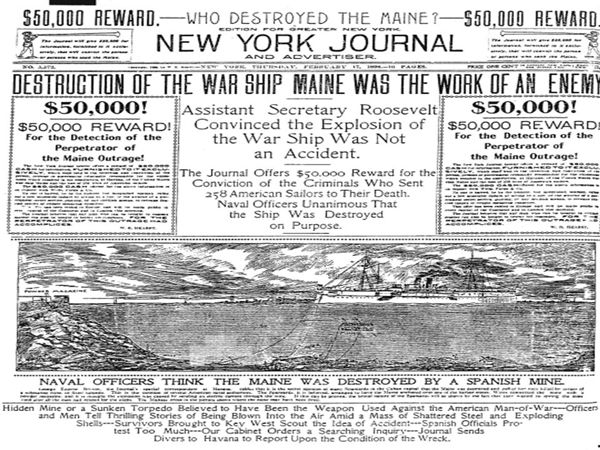

Imagine, dear readers, that you open up the New York Times one morning and see a shocking headline. A foreign country—let's call it Atlantis—has attacked one of your ships unprovoked, blown it up, and killed hundreds of civilians. It's an act of war.

Well, probably. The government of Atlantis denies any involvement. Few people were on the scene. You have no way to personally confirm any of the facts. The most damning claims against Atlantis come from confidential sources in national security. Nevertheless, the journalist writes as if the reported version of events is confirmed all over the place and definitely true. Indeed, he seems just as confident as if he'd died on the ship himself!

So: do you support a declaration of war? The question isn't as easy as it might seem. Let's think about how you'd answer it.

Which of these casus belli turned out to be true? Even though millions of lives were at stake, it wasn't always possible to be sure on the day they were reported.

You would like, of course, to confirm the facts directly. But as I've already stated, there's no way to do that. You're dependent on secondhand reports. And while you can sometimes identify falsehoods by looking for contradictions in hearsay, none are apparent here. It's simply a matter of deciding whether you trust the men making the claims: the journalist, his editors, media owners, your national security administration, etc. To do this you'll consider their previous record of honesty or dishonesty, as well as their motivations and loyalties, just as you did when cheating at cards. And then you'll make the most critical decision of all—the decision whether or not to go to war—on the basis of an ad hominem judgment of trustworthiness.

Of course, however important they might be, nobody believes our political decisions can always be based on absolute knowledge. So you're likely thinking, “Trust may be relevant in politics; but it's not relevant to the production of real knowledge like physics or mathematics.”

But that isn't true. Even hard science depends on trust, as I'll demonstrate next.

C. Let's Oppress The Peasants In The Name Of Science (As If We Needed An Excuse!)

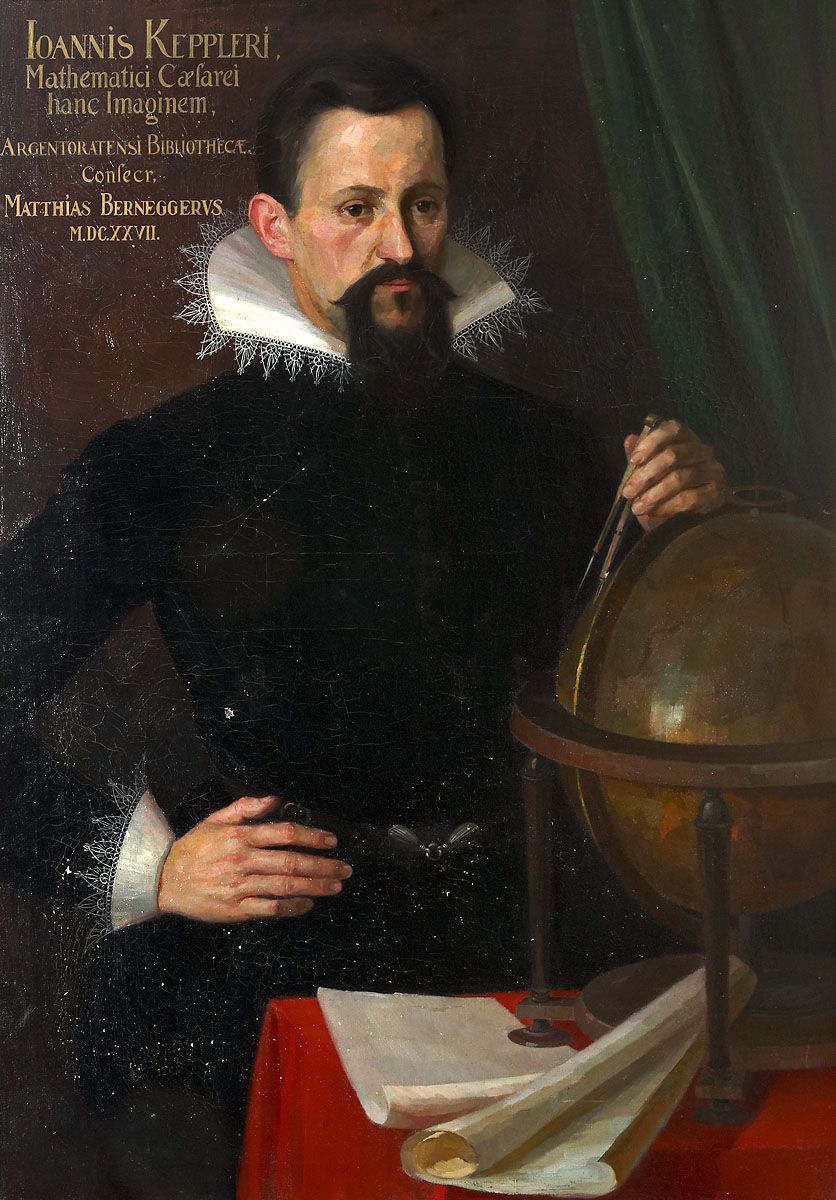

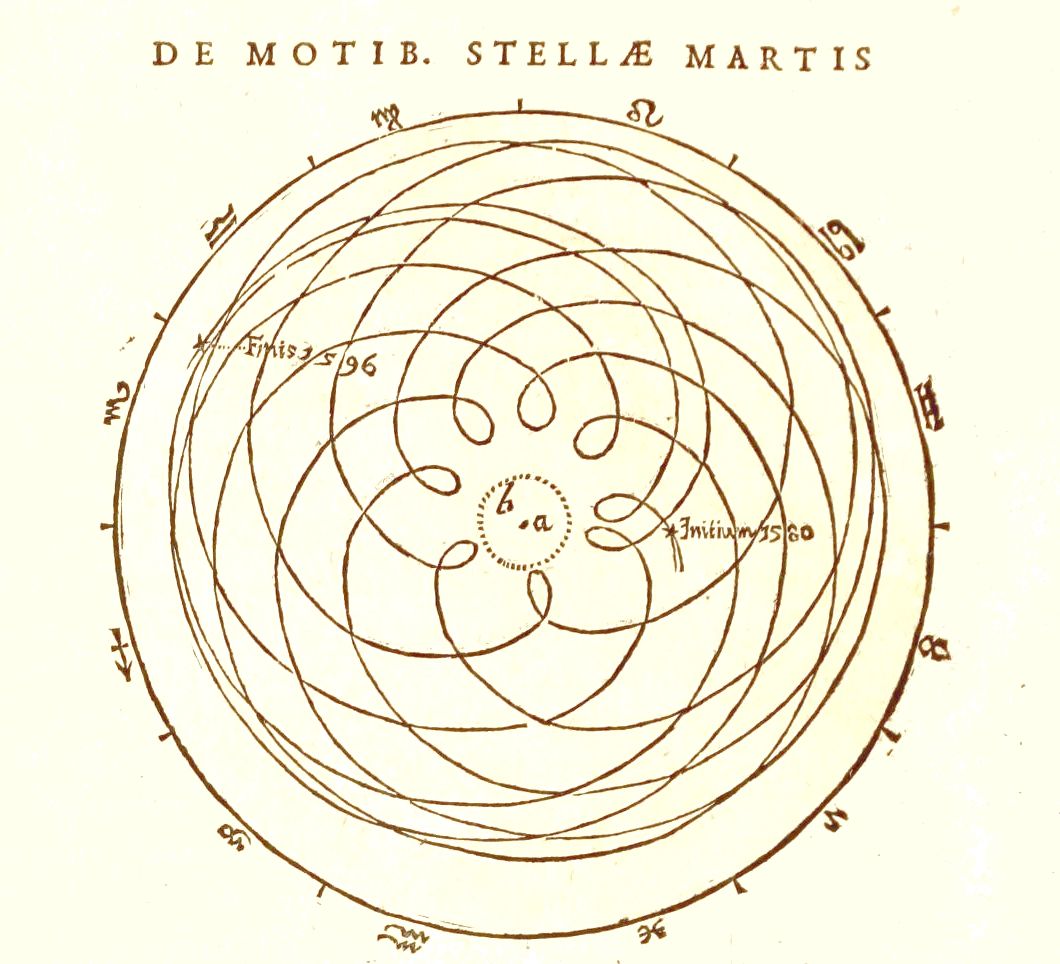

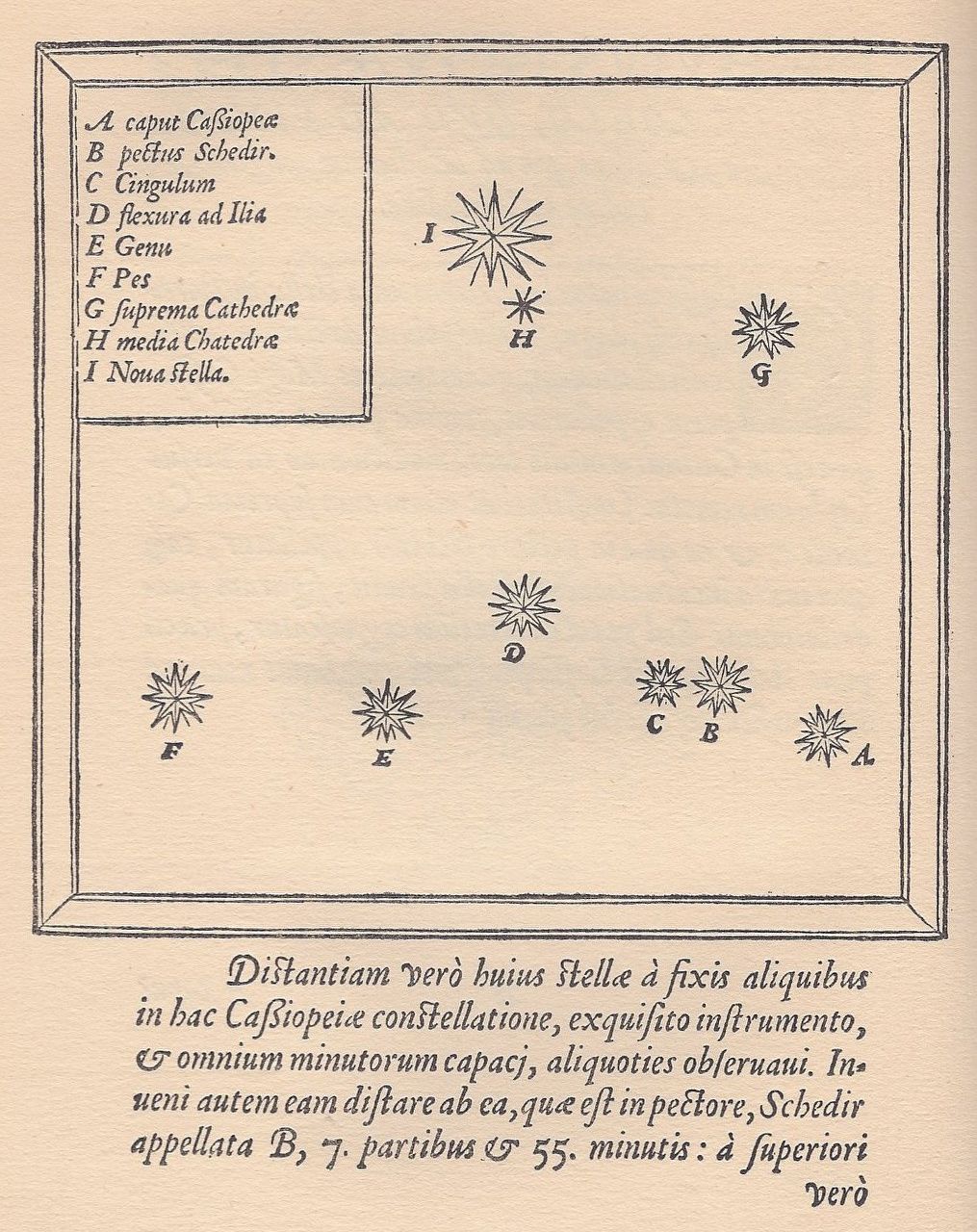

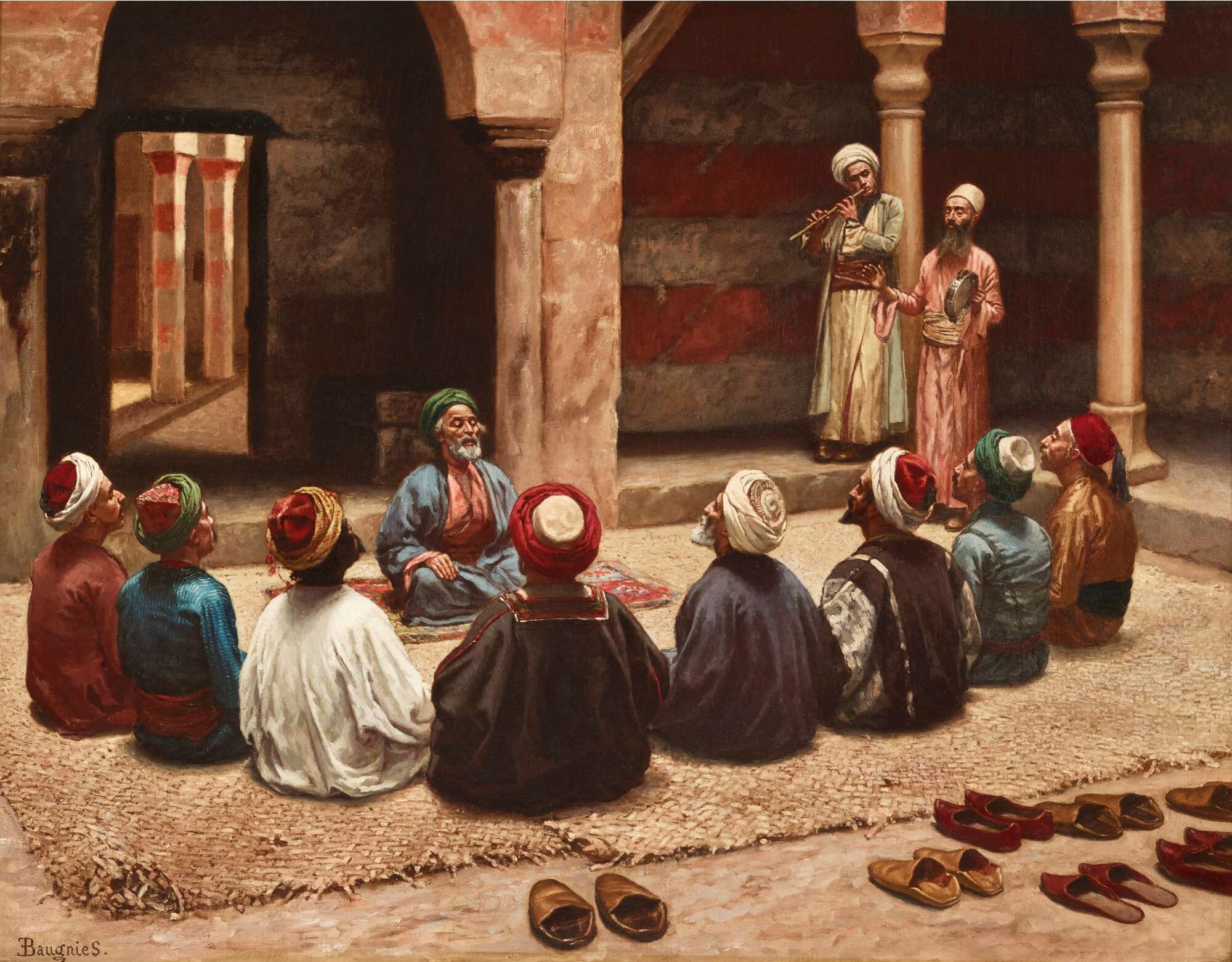

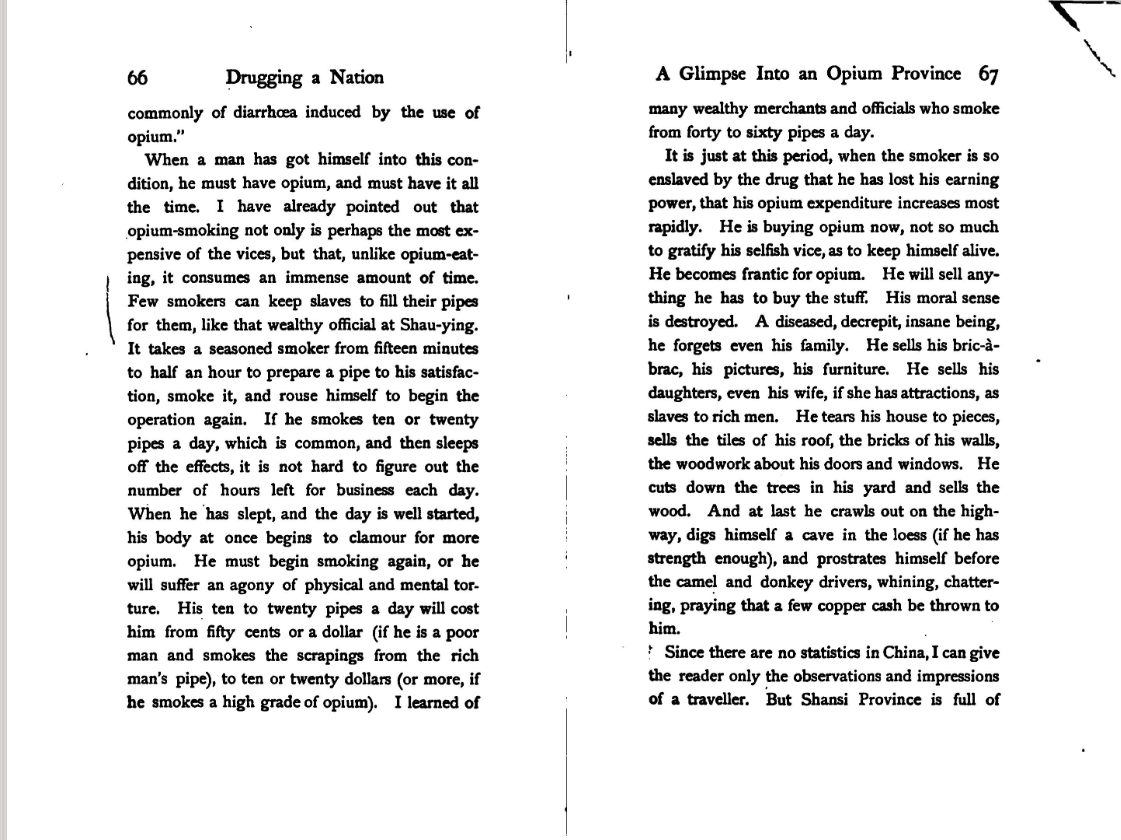

Tycho and Johannes.

Imagine, dear readers, a pair of scientists. Tycho is a wealthy noble who dabbles in astrology in his spare time. Johannes is a theorist with a great mind for geometrical models.

One day a private island happens to fall into Tycho's hands. He does what anyone would do with a private island: forces his peasants to build a state-of-the-art facility for recording astrological observations. (Let's face it, if he didn't crack the whip they'd just be swilling cheap beer and reenacting the least wholesome scenes from Chaucer.) Tycho then presents his observations to Johannes, who will set to work searching them for patterns.

Johannes' theories can only be as good as the observations that form their basis. So Johannes needs to confirm the observations provided by Tycho are indeed correct; if they're not, trying to fit them to geometrical models will be a waste of time. This isn't just a matter of evaluating Tycho's methods. It's also a question of trust. Is Tycho reliable, or lazy, erratic, and sloppy? Is he a friend, or an enemy who might knowingly share false information? Would he fabricate data to advance his career? Is he the partly unwilling victim of an astronomical blackmail ring? Johannes' scientific research depends on the answers to these distinctly unscientific questions, because they all have bearing on the reliability of Tycho's data.

This example drawn from the heart of physics is analogous to the card cheats we discussed earlier. One party transfers information to another party who can't verify it directly. The recipient is therefore forced to substitute an ad hominem judgment for direct verification.

Such substitution is omnipresent and indeed indispensable to the progress of science. Without it Johannes would have to repeat the observations himself. Even assuming he could arrange to do this (impossible in practice, since like far too many of us, he couldn't afford a private island), the efficiency cost would eventually halt progress. Indeed, if there's no trust, then every single person—to know anything at all—has to personally perform every experiment and verify every piece of information that feeds into the final conclusion. There's simply not enough time to do that.

As a matter of historical fact, Tycho seems to have been as trustworthy and exacting as could be hoped. But because his assistants were sloppy, his astronomical tables contain random errors. Johannes would have therefore been wise to keep the question of trustworthiness in the back of his mind even during his theoretical research, lest he throw out a good theory before considering that some anomalous data points might have been the product of human failings.

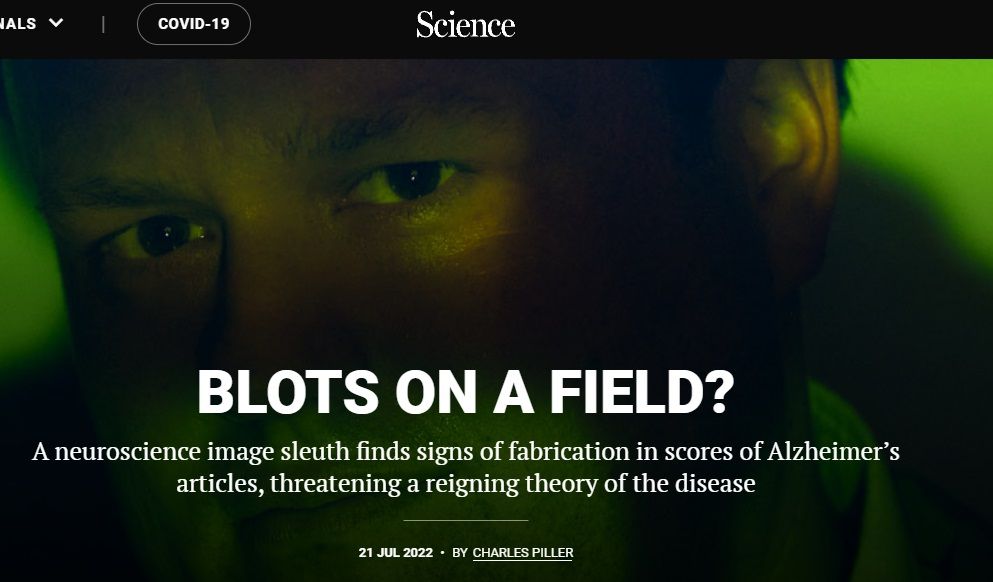

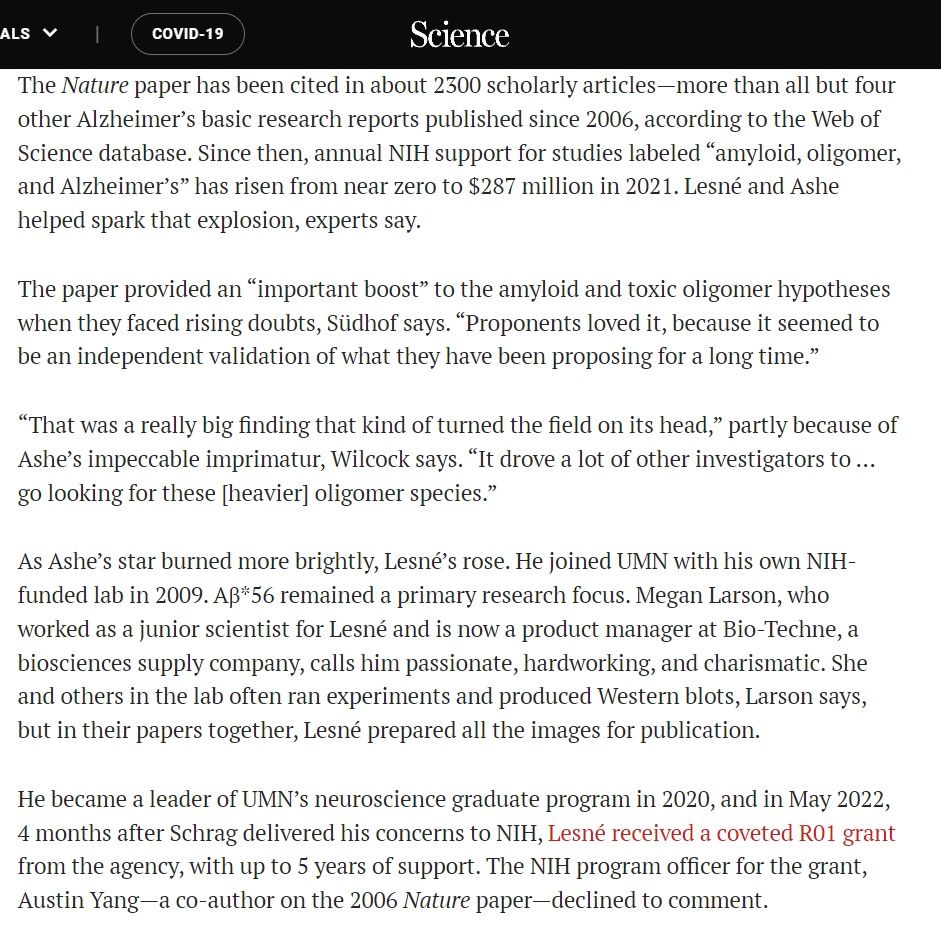

This scenario isn't unique to a particular early moment in the history of physics, nor is it a mere irrelevant technicality in the knowledge production process that you can brush aside, as you might feel tempted to do. Untrustworthy research is a sad reality, and not only in the soft sciences. For instance, Alzheimer's research was set back substantially by a fraudulent but highly cited paper that scientists in the field mistakenly trusted. Alzheimer's is responsible for over 100,000 deaths per year in the United States alone, so slowing progress toward the cure by as little as a day is equivalent to an act of mass murder. And someone was willing to commit that act of mass murder, dear readers, just for the paltry amount of status and money he'd gain by advancing his scientific career!

Even the hardest of hard sciences, mathematics, is dependent on trust. Because anyone can verify all the propositions in Euclid personally, we're tempted to assume professional mathematicians individually work through every proof from the Pythagorean theorem up to the latest publications, with no need to trust other humans. This is far from the case. Today's advanced mathematics has reached such a degree of complexity that only a tiny number of people possess both the brainpower and the time to verify new theories, let alone to check each step from the beginning of mathematical history. So although we conceive of mathematics as an accumulating sequence of absolutely certain intuitions, in practice this sequence can't be completed within any individual human mind. Instead, big gaps between intuitions are linked together with nothing more solid than trust in other mathematicians who claim to have checked them. In other words: ad hominem judgment.

A salient recent example is Shinichi Mochizuki's Inter-Universal Teichmüller Theory. Mochizuki claims his theory solves important mathematical problems, but its length and complexity are such that hardly anyone can confirm his claim. The tentative consensus among professional mathematicians seems to be that Mochizuki's proof is flawed, but it's hard to say what “consensus” could even mean in a context where only a handful of people can examine it with the necessary thoroughness.

Mochizuki, for his part, has gathered local supporters around him and published the Inter-Universal Teichmüller Theory in a journal he edits. The scenario resembles a gang turf war more than a shining ornament atop the ivory tower of knowledge, because even the large majority of seasoned professional mathematicians can do no better than guess at the theory's validity by deciding which little posse of better-informed mathematicians they consider more trustworthy. And even if a genuine consensus eventually does emerge, the vast majority of mathematicians will still decide the theory's validity on the basis of trust in other mathematicians, not direct knowledge.

So for the sciences, however hard or soft they might be, the traditionally recognized means of knowing—perception, intuition, etc.—are only the initial stages of knowledge production. Trust is an ineliminable corollary of the division of labor that permits their advanced development.

To summarize the argument:

1. Advanced knowledge production requires collaboration.

2. Collaboration entails information transfer from one party to another.

3. When information is transferred the receiving party substitutes an evaluation of the providing party's trustworthiness for direct verification of the information.

4. Advanced knowledge production therefore depends on trust.

D. Let's Ad Hominem God (Faith, Paranoia, and Conspiracies)

We've established that trust grounds our knowledge in card games, politics, science, and even mathematics. But what if I told you that trust actually grounds all our knowledge of anything whatsoever? Descartes came within a small step of making such a claim—and I'm going to take that small step for him.

Descartes speculated that there might be an evil demon distorting all of our perceptions and even our most basic cognition, so that nothing we perceive or think is real. The “cogito ergo sum” that follows this speculation is famous, of course. But few people today bother to read past the cogito to learn just why Descartes ultimately decided he could trust his thoughts and perceptions, and trust the world to be real rather than an illusion created by an evil demon. It was because he deduced—by means of the ontological proof—that God exists and cannot be an evil demon.

But I, dear reader, would rather pull that ontological proof out from under your feet like a magic carpet. Because if one doesn't accept the ontological proof—and I doubt you do—then in my new and upgraded version of Descartes' argument one can only take God's existence, goodness, and even the reality of the world itself on faith.

This faith that the Creator of our world is good is nothing but the ultimate ad hominem judgment: an extreme form of trust to underpin all of existence. And the paranoia motivating the fear that this Creator might instead be an evil deceiver—that too is nothing but an ad hominem judgment: an extreme form of distrust calling all of existence into question.

God and the evil demon in my version of Descartes' thought-experiment represent the two poles of what I call the faith-paranoia axis. This axis is fundamental to human cognition. How do you know, for instance, that your friends aren't secretly conspiring to murder you? Well, you don't know absolutely. They might be. And sometimes the mad begin to think along just these lines.

You're probably inclined to dismiss this type of paranoia as irrational and of no relevance to a sane person like yourself. But that's not the case, because even if it's “mad,” it contains no logical error. When you look at someone's face, listen to their tone of voice, and examine their diction, it isn't reason, but rather intuition—yes, the kind of intuition we use in ad hominem judgment—that informs you whether they're telling the truth or lying, whether they're a friend or a foe. And this intuition hasn't been calibrated by reason or scientific experiment, but by experience, and especially by that supra-individual form of assimilated experience known as instinct: your evolutionary inheritance.

Now: are there not people who are too credulous or too distrusting? And how can you be sure you're not among them? What if you began to sense an untrustworthy look in your friend's eyes; what if you began to suspect a cabal of evil scientists fabricated all the data at your disposal—what if, at certain moments, you began to sense the kind of skipping frame rate you'd expect from a glitchy VR set? Reason, in the strict sense, wouldn't offer you an exit.

Some people overcorrect for paranoia and are too dismissive of conspiracies. We have good historical evidence of real conspiracies that most rationalists would dismiss as implausibly fantastical, and there's no reason there can't be any such in the present day.

The truth is that we never have enough information to be entirely sure there's no trick, no illusion, no conspiracy behind the way things appear; we can never exclude all possible grounds for paranoia on the basis of reason alone. The screen you think you're reading right now, the ground beneath your feet, even your memories might be illusory. You might be in a basement dungeon, trapped inside a full-body VR suit by means of which an evil content moderator is manipulating your experiences—Plato's cave and Descartes' evil demon in modern costumes. (Apologies if I offend any of my dear readers by pointing this out, but the simulation hypothesis, so dear to Silicon Valley intellectuals, is a rehash of a thought-experiment already known to philosophy for hundreds and indeed thousands of years. I have no idea why “Plato's cave, but with computers!” so easily impresses intelligent people.)

Thus ad hominem judgments of trustworthiness, understood broadly, don't just ground politics and science and cards; they're at the very core of our ability to know anything at all. Our minds are tuned for a certain balance of faith and paranoia, a particular position on the faith-paranoia axis of cognition, that enables us to make good decisions about when to trust and when to doubt. Those who are mentally ill tilt away from the optimum, have too much faith or too much paranoia, and make, by consequence, poor choices. Or at least choices that seem poor to us. For functioning effectively in the world we inhabit may require trusting certain illusions, and perhaps it's precisely by seeing through to the true reality that the insane become dysfunctional.

But you're not dysfunctional, are you, dear readers? In fact, you're ready to continue to the second part of this essay. It's just below.

Part II: Trust Networks and Epistemic Load

A. Let's Fire A Scrupulous Researcher (Trust Networks)

Thus far we've established that most of our knowledge depends on trust, and also that we tend to trust friends and distrust enemies. Friendship is typically mutual, so in practice groups of friends trust each other and distrust groups of enemies. The consequence of this is that when it comes to divisive issues—even those normally considered in the purview of science—humans cluster into distinct networks with shared beliefs. I call these trust networks.

We can formally define a trust network as a group of subjects who assume without direct verification that each other's claims are more likely to be true than the claims of non-members. The magnitude of this trust differential varies according to the circumstances.

In the examples that follow I'm going to emphasize the harms done by dysfunctional trust networks. This is because when trust networks are working well and arrive at the truth, we don't really need to understand how they work—just as we don't need to understand how our car works until the day it stops working. But when they fail, understanding becomes useful to facilitate repair. Thus it's first and foremost to the cases and causes of failure that we ought to direct our attention.

Nevertheless, despite the discouragingly realistic scenarios you're about to read, I don't believe, nor am I arguing, that trust networks are always harmful and dysfunctional, that all science is corrupt, that everything is relative, that social factors completely overwhelm our ability to discover the truth, nor even that dissidents are necessarily more trustworthy than venerable institutions. I'll offer a more positive vision in Part IV of this essay, so please be patient.

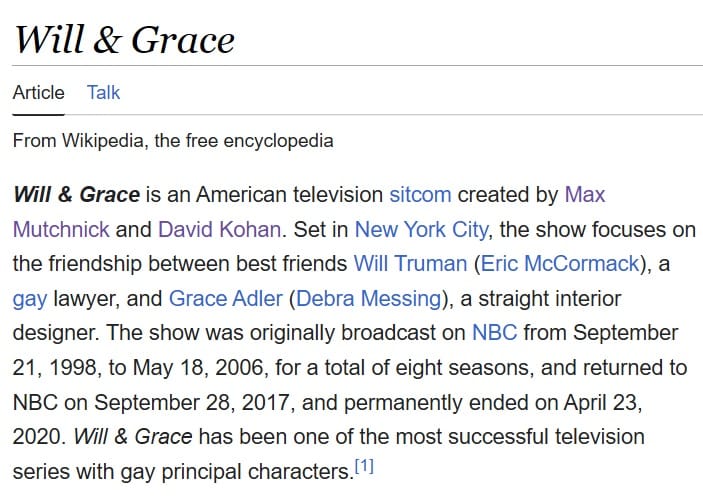

I'd like you to imagine, dear readers, a social scientist named Tom. Tom is a supporter of a political party we'll call the Yellow Party. In fact, it's hard to finish a conversation with Tom before hearing him bash the opposition, the Purple Party, at least once. Tom's latest published and peer-reviewed social science research confirms that the Yellow Party has the best platform ever, almost guaranteed to solve the most pressing social problems, and that the Purple Party has the worst platform in history, or nearly so. There's a second social scientist named Dick. His field of research is a bit different from Tom's, but he also supports the Yellow Party. And Dick's latest published research also confirms that the Yellow Party has the best platform ever, and that the Purple Party has the worst in history, or nearly so.

Now, the default human behavior is for Tom and Dick to evaluate each other on an ad hominem basis, recognize each other as allies, and then by consequence trust each other's research with less than deeply skeptical inspection. And that's exactly what they do. Of course certain i's have to be dotted and t's crossed, and the basic structure of the research has to resemble good science just as a card cheat's account of your opponent's hand has to include the correct number of cards if you're to take him seriously. But that's a simple enough matter, and both Tom and Dick are highly skilled at making their work look professional. So skilled, in fact, that they can make it look professional whether or not it's strictly true. Soon Tom is telling his students how great Dick's research is, and Dick is telling his students how great Tom's research is. When it comes time to select graduate students, Tom is disinclined to accept applicants who doubt Dick, and Dick is disinclined to accept applicants who doubt Tom. Well, unless they're really pretty.

There's also a third social scientist named Harry. Harry is scrupulously neutral and sets a very high standard for his research. He does everything he can to exclude bias and neither hacks nor even slashes his p's. He's pretty much oblivious to both parties. And when it comes time to pick graduate students or select new professors, Harry judges them purely on the basis of ability.

Harry, unfortunately, is about to win the academic version of a Darwin award. He, Tom, and Dick are all on the selection committee for new professors. When they have equal qualifications, Harry is just as likely to select a supporter of the Yellow Party as he is a supporter of the Purple Party. But Tom and Dick are inclined to favor supporters of the Yellow Party, all things being equal, and sometimes even when all things aren't equal. They don't actually feel biased. They just know their side is closer to the truth and, well, more trustworthy. We can distinguish this preference for friendly personnel from the extra trust we put in “friendly” beliefs, but because they're rooted in the same ad hominem judgment they go hand in hand, and ultimately reinforce one another.

In the long run it doesn't matter how many Harrys there are, because a positive bias added to any number of neutral votes will always generate a positive total bias. As it happens, Purple Party supporters are less likely to enter this field than Yellow Party supporters, so Tom and Dick start with a numerical advantage by default. Since the biased are more likely to hire the biased, the total bias will increase with each iteration of the hiring process until Yellow Party supporters dominate the institution.
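For readers who like to see the gears turn, the ratchet just described can be put in a few lines of Python. This is only a rough sketch under assumptions of my own choosing, not a measured result: the faculty holds just two kinds of people, partisans who favor their own side and everyone else who selects neutrally; each hire is decided by one selector drawn at random from the current faculty; and every partisan hired is as biased as Tom and Dick. The function name `simulate_hiring` and the parameters `pool_share`, `favoritism`, and `turnover` are hypothetical labels invented for the illustration.

```python
def simulate_hiring(pool_share, favoritism, turnover, rounds, start_share=None):
    """Return the partisan share of the faculty after each hiring round.

    pool_share : fraction of applicants who are partisans (0..1)
    favoritism : extra chance (0..1) that a partisan selector passes over a
                 non-partisan applicant in favor of a partisan one
    turnover   : fraction of the faculty replaced in each round (0..1)

    All three parameters are illustrative assumptions, not estimates.
    """
    share = pool_share if start_share is None else start_share
    history = [share]
    for _ in range(rounds):
        # A neutral selector (a "Harry") hires partisans at the pool rate.
        neutral_rate = pool_share
        # A partisan selector (a "Tom" or "Dick") hires them more often.
        partisan_rate = pool_share + favoritism * (1.0 - pool_share)
        # Each hire is decided by a selector drawn at random from the faculty,
        # so the expected hire rate blends the two according to current share.
        hire_rate = share * partisan_rate + (1.0 - share) * neutral_rate
        # Retirees leave in proportion; new hires fill the vacated seats.
        share = (1.0 - turnover) * share + turnover * hire_rate
        history.append(share)
    return history


if __name__ == "__main__":
    for favoritism in (0.0, 0.3, 0.6, 0.9):
        final = simulate_hiring(0.55, favoritism, 0.1, 200)[-1]
        print(f"favoritism={favoritism:.1f} -> long-run partisan share {final:.2f}")
```

With `favoritism` at zero the faculty simply mirrors the applicant pool. Any positive value pushes the partisan share above the pool share, round after round, toward `pool_share / (1 - favoritism * (1 - pool_share))`. In this simplified version the takeover becomes total only as favoritism approaches 1, so treat it as a picture of the direction of drift rather than a forecast of its endpoint.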

As Yellow Party supporters become a majority and then a supermajority, scrupulously neutral Harry will begin to look very purple compared to the average professor. He's drifting away from the scientific consensus—or rather new personnel are drifting the scientific consensus away from him. If he hangs around long enough and dissents too loudly from the rising orthodoxy, he might even be ostracized as a Purple Party sympathizer and pressured out of his job.

The final result is that Tom and Dick build an institutionally validated network of mutually trusting allies with a shared picture of the world, which they'll call, for lack of a better term, “established science,” whence information supporting Purple Party positions will be thoroughly and effectively suppressed.

Tom and Dick's dominance in academia will grant them the ability to provide their friends with training, salaries, and credentials, while denying the same to their enemies. Since training amounts to a genuine advantage and bureaucratic hiring and promotion processes typically give more weight to credentials than results, the Yellow Party may eventually come to dominate official bureaucracies as well, and these bureaucracies may return the favor by rewarding Tom, Dick, and their friends with more research grants. In fact, the whole process could have been set in motion the other way around, with partisan grantors shifting the initial balance in academia by funding a greater number of friendly grantees. Either way it creates a self-reinforcing trust network of captured institutions.

However, Tom and Dick's enemies—the supporters of the Purple Party—are not impressed. Their default behavior is to distrust any supporters of the Yellow Party, and most especially to distrust anyone whose research claims the Yellow Party has the best political platform in history and the Purple Party the worst. They'll therefore tend to separate themselves from supporters of the Yellow Party and form a distinct trust network with its own mutually reinforcing allies and its own shared picture of the world. Naturally they won't dominate the same institutions. And when they do try to engage in social science and the like without institutional support, they'll typically be amateurish and sloppy, since they've been denied access to the training and funding necessary to achieve professional quality. (This will in actual fact decrease their trustworthiness, but as a consequence of their exclusion, not necessarily of the values, interests, and capabilities the Yellow Party will blame for their incompetence.) Eventually institutions will be viewed as part of either the Purple Party's trust network or part of the Yellow Party's trust network, depending on which faction dominates.

All this derives from simple game theory, without the need for unusual political conditions or any formal conspiracy whatsoever—but just a predominance of like minds who instinctively filter new entrants for more like minds in an environment where performance and conformity to reality are selected for only weakly, or not at all.

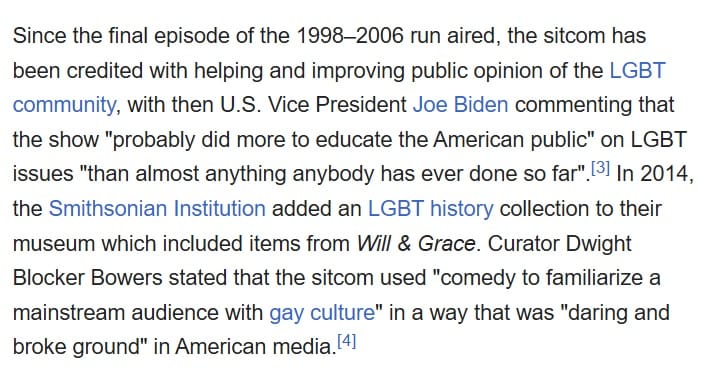

In saying this I don't intend to imply that a conspiracy to precipitate the same result wouldn't be feasible, but only that it isn't strictly necessary. In fact, clever conspirators could achieve great things with little effort by leveraging the game theory just analyzed as a fulcrum to move the world. Once their partisans were installed, the conquest would be largely self-sustaining, or at least sustaining long enough for the ant to climb to the top of the blade of grass, and the mutual reinforcement we've just described could give them unbreakable control of several key institutions at once.

“Emergence or conspiracy” is a false binary, because a clever conspiracy will elicit, guide, and exploit emergent behavior to serve its own ends with minimal force rather than attempting in vain to independently control each element. If you naively overlook or dismiss the possibility of predators, sheepdogs, and shepherds, you can't understand the behavior of a herd.

As for members of the lay public, they have even less time to thoroughly scrutinize Dick's work than Tom does, so how much they trust his research is in large part a function of whether he falls within their own trust network. Here it's important to clarify, however, that trust networks aren't only nor even necessarily a matter of political alignment (nor should you imagine that “centrists” are disconnected from these networks—to the contrary). The game theory I've just described can play out with any kind of faction, any number of trust networks can coexist, interconnect, and overlap, and there are other intra-network factors that influence trust as well. The boundaries between one network and another are not strict; they resemble rather a colony of spiderwebs crossing over and under each other. If you did set out to map them, you'd find that we all participate in many. For instance, your extended family could be considered a trust network even though the members may differ in political alignment; likewise for professional associations. I've chosen to illustrate here with a pair of fictional political parties because it's simple, easy, and familiar.

When social conflict is minimal and selection for genuine competence is incentivized, the effects described above will be modest and the divergence of trust networks will remain incomplete. This is because the requirement for competence puts a brake on selection for partisans and impractical ideologies. But in times and places where social conflict is heightened and selection for competence is not incentivized (or worse still, where partisanship per se is incentivized, as in journalism today), the trust networks will separate faster and diverge more substantially, because the friend-enemy distinction will be weighted more heavily—to the point where it overwhelms competence and character. As the famous saying goes, “He may be a psychopath, but he's our psychopath.”
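One crude way to picture this weighting is a blended score. The sketch below is an assumption of mine for illustration only: a selector scores each candidate as a weighted mix of competence (on a 0 to 1 scale) and allegiance, with a hypothetical `partisan_weight` standing in for the heat of the social conflict. The names `choose` and `competence_gap_overridden` are likewise invented.

```python
def choose(candidates, partisan_weight):
    """Pick the candidate with the highest blended score.

    candidates      : list of (name, competence in 0..1, is_ally) tuples
    partisan_weight : 0 means pure merit, 1 means pure friend-or-foe
                      (an illustrative assumption, not a measured quantity)
    """
    def score(candidate):
        _, competence, is_ally = candidate
        loyalty = 1.0 if is_ally else 0.0
        return (1.0 - partisan_weight) * competence + partisan_weight * loyalty

    return max(candidates, key=score)[0]


def competence_gap_overridden(partisan_weight):
    """Largest competence deficit an ally can carry and still beat an outsider."""
    if partisan_weight >= 1.0:
        return float("inf")  # competence no longer counts at all
    return partisan_weight / (1.0 - partisan_weight)


if __name__ == "__main__":
    applicants = [("outsider", 0.9, False), ("ally", 0.6, True)]
    for weight in (0.1, 0.3):
        print(weight, choose(applicants, weight), competence_gap_overridden(weight))
```

Under this scoring rule an ally wins whenever his competence deficit is smaller than `partisan_weight / (1 - partisan_weight)`. Since competence here never differs by more than 1, a weight above one half means the ally wins no matter how incompetent he is, which is the psychopath saying rendered in arithmetic.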

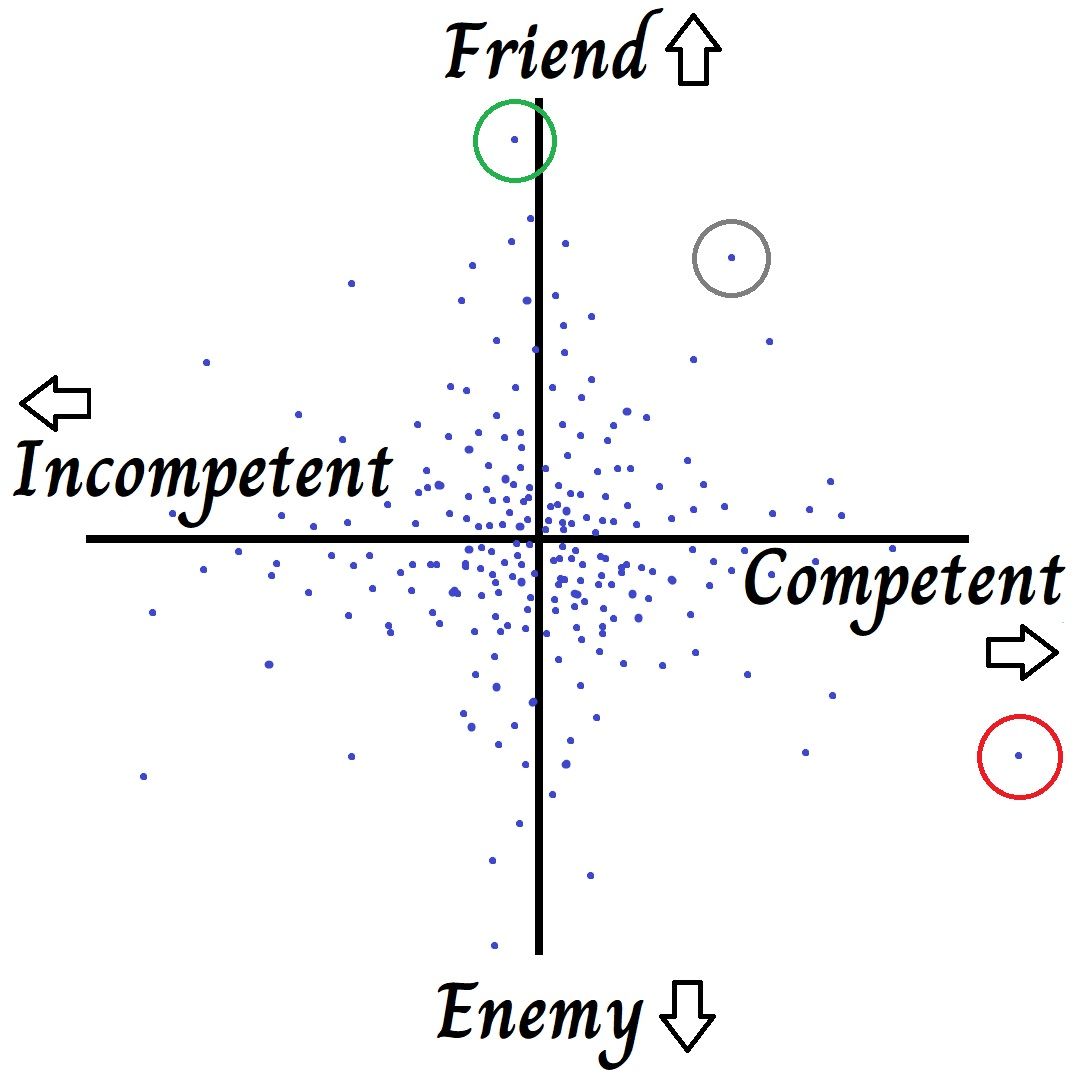

The basic mathematical model below illustrates this point.

So, I've explained the concept of trust networks and given an overview of how they operate. In the next few sections I'm going to drill down and look more closely at how social forces within and between trust networks degrade the accuracy of collectively held beliefs. I call the action of these forces on belief epistemic load, and I'm going to separately analyze two salient components: signaling load and partisan load, both of which hinge on ad hominem judgments.

To tackle the first I'll begin with an abbreviated discussion of social signaling itself, already a familiar concept to my dear readers, and then use a few colorful examples to demonstrate how it degrades knowledge.

B. Let's Fry Our Brains On Social Media (Signaling Load)

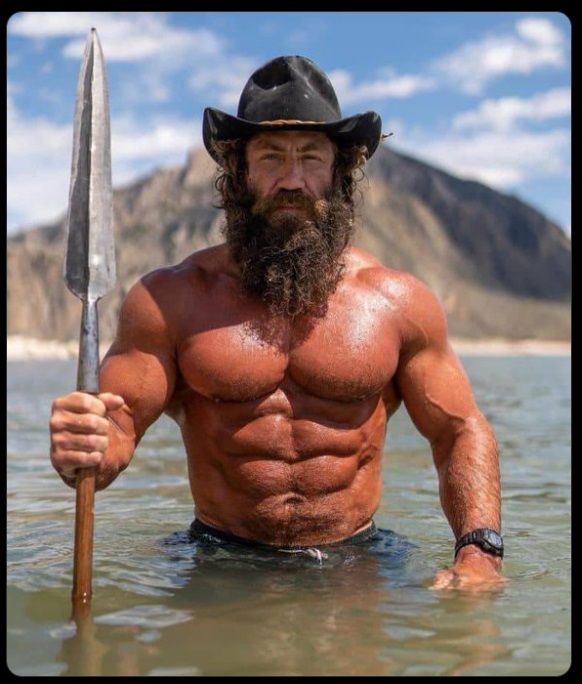

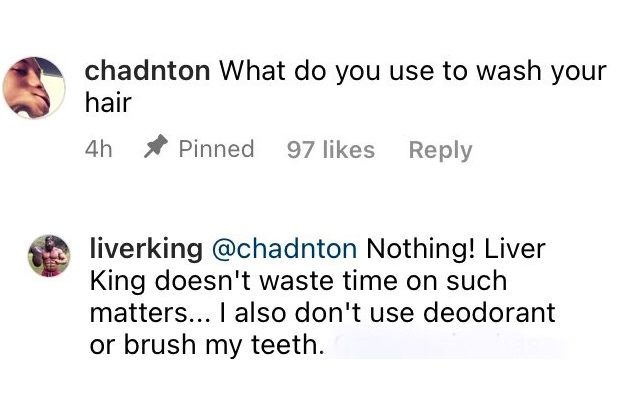

Influencers.

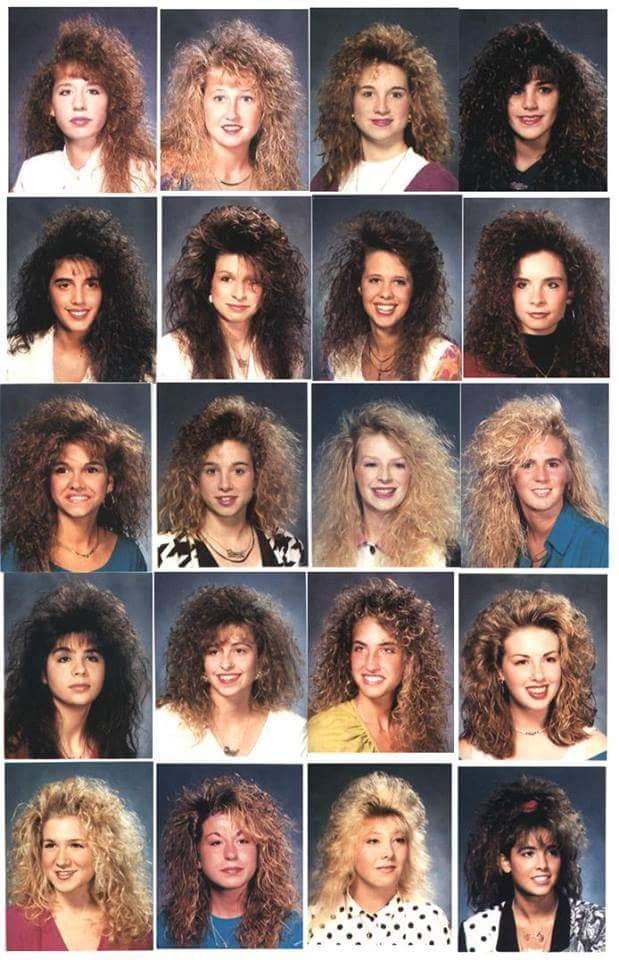

We signal our identity, our positive character traits, and our membership in social groups and sub-groups by diverse means, which include clothing, hairstyles, aesthetic tastes, car models, bumper stickers, speech habits, customs, rituals, and even beliefs—professed or real, true or false. Such signaling is a basic component of our participation in society, and even refusal to signal, e.g. wearing utilitarian clothing, can and will be interpreted as a signal.

The prominence of these various signaling methods changes over time, and each has a different set of side-effects, on account of which their total merit is decidedly unequal. Belief is the most dangerous category of signaling by a long way; hairstyles and bumper stickers, on the other hand, are fairly benign. For while some may have died from dressing in climatically unsuitable uniforms, and a few from wearing inadvisably long hair too close to heavy machinery, millions upon millions have died because they adopted harmful beliefs to serve the same basic purpose. Indeed, socially motivated adoption of harmful beliefs is perhaps the single most common failure mode for civilized humans. Thus it behooves us to encourage our society to signal through other means whenever possible.

When our social regime uses beliefs to signal, we suffer a strong pressure to pronounce, demonstrate, and hold the beliefs that are optimal for signaling rather than those that optimally represent reality, which causes our collective beliefs to shift away from the latter and toward the former. I call this pressure on belief signaling load. Of course, beliefs always play a signaling role to some extent, but the degree and the particular types of beliefs involved differ by time and place, and they both influence the magnitude of the signaling load. I'll illustrate with the following pair of examples. They're more florid than the previous; since I'm writing this essay between novels I hope you'll forgive me.

Imagine, dear reader, that you're a young Occidental in the second half of the 20th century. Music has at this time an outsized importance: rock and roll songs are everywhere, the big hits are a big deal, and everyone you know is going to concerts and wearing their favorite bands' t-shirts as well.

In another day and age you might not have been musically inclined, but rock and roll is so ubiquitous that you come to understand and enjoy it well enough, and you too navigate the host of bands to find the ones you like, wear their t-shirts, and take girls to their concerts. You're planning to be a high-powered lawyer with a big salary one day, so you pick bands that are polished and popular. Nothing too punky or arty—those bands are for the kids who are going to be brewing your coffee after you make it big; and when you see someone in the wrong t-shirt you're thankful for the clear warning to steer clear.

Your musical signaling gets you in with the right crowd, it draws the attention of the right kind of girls and lets them know you're the right kind of guy, and in short it allows you to advertise your identity and group membership effectively and at minimal personal cost, all while enjoying some pretty decent tunes and funding the arts. It's a win-win deal that clarifies social groups marvelously, and it seems so normal to you that you can hardly imagine society working any other way.

Okay. Now forget all that, and imagine instead, dear readers—I know this one will be hard—imagine instead you're a young Occidental in the first half of the 21st century. By the time you were old enough to tie your shoes your neck was already permanently craning forward, but you don't mind, because everyone else is addicted to their phone too. Music is passé, although you're unaware of just how passé, because you have no memory of the previous century. These days social signaling is mostly happening online.

You have accounts with several social media networks. Some of these are set up for signaling with photos and videos, showcasing lifestyles which may or may not actually be lived by the narcissists pictured, while others are set up for signaling with small chunks of text known as “takes.” (“Takes” are expressions of personal beliefs or opinions related to the current topic everyone is discussing. They don't require any special knowledge, experience, or insight that would justify opening one's mouth in public, but resemble instead the effusions of whales who gather in one place to spout the same water to varying heights.)

Like your 20th-century predecessor and everyone else, you know intuitively that you need to signal your group membership and identity so you can find the right crowd and the right girls; and you know intuitively that it's good to be popular. Since you're not quite handsome enough to share lifestyle photos of yourself surfing, you decide to post your takes instead. They come from the heart (sort of, maybe—well, actually you're not quite sure and it's not worth worrying about) but it so happens that they amount to paraphrases of already popular takes, signed under your own name. Not that you're intentionally copying, of course. It just ends up looking that way to an outside observer.

You don't have any special insight into whether the semantic content of these takes is actually true (who does?) but it feels right to post them, and lo and behold, your signaling helps to make you some friends. Your friends, naturally, express similar beliefs and congratulate you on yours, and everyone in the trust network you've joined echoes the same takes with small variations, each aiming to post the coolest and most popular expression of shared beliefs that are under no particular obligation to reflect reality. (I'll intervene in this story to tell you a secret, dear readers. Many of these beliefs are false! Can you believe it?)

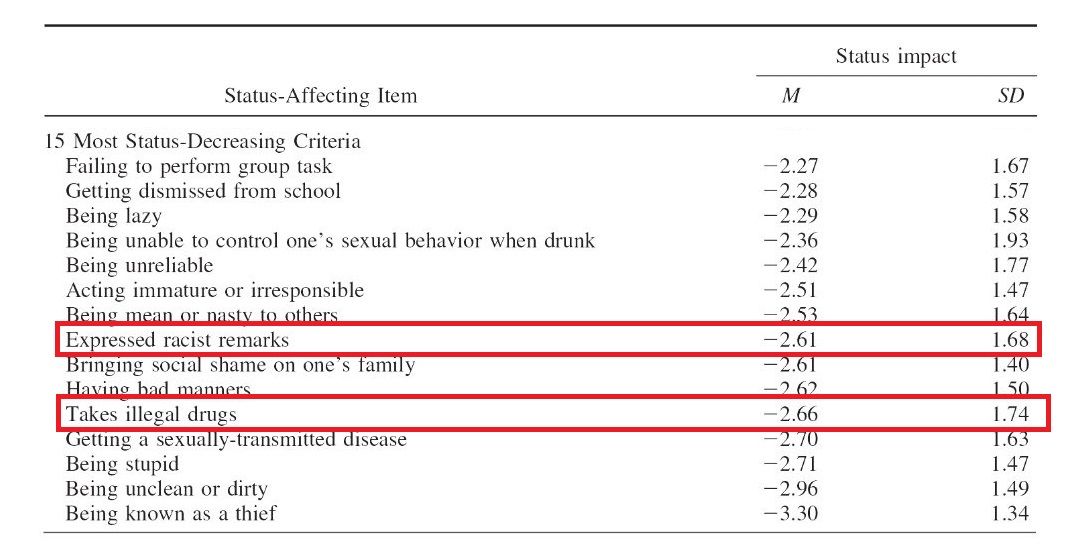

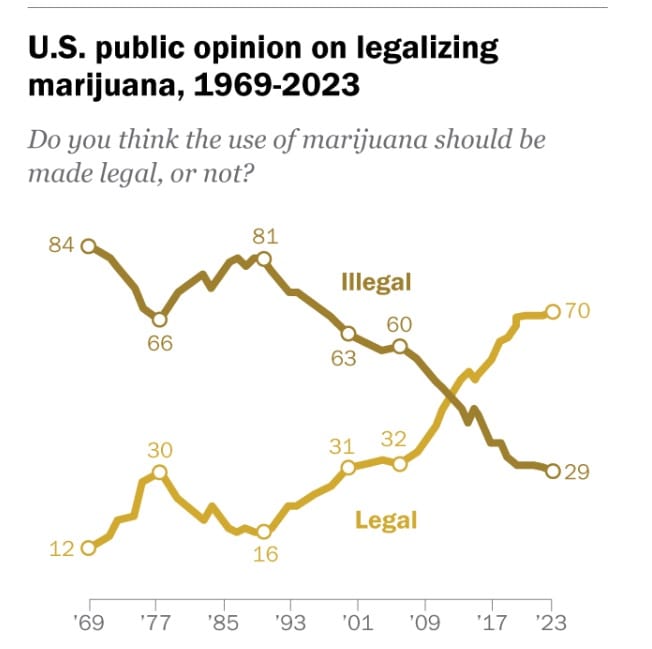

I don't normally trust this kind of survey research, but since it supports my theory, I'll trust it in this particular case. See how that works?

You have lofty aspirations, much like our ambitious lawyer from the previous century, but law isn't looking like such a great career these days, so you've set your eyes instead on investment banking (salaries start at $150k they say). You notice some social media accounts are advocating for offbeat, unpopular, and even taboo beliefs—beliefs not shared by the big or cool accounts, nor the accounts of model finance professionals you hope one day to emulate. You instinctively avoid following any of these losers. You're not even sure you'd let them serve you coffee, because if they found a sly way to sneak one of their unpopular ideas under your guard, you might say the wrong thing at a company party and fall into the same hole they'll end up in.

While it would be harmful to acknowledge, let alone understand, weirdos with socially unacceptable views, conflict is popular, and everyone enjoys blaming problems on a bad guy lest they have to accept the blame themselves. Happily you live in a democracy (yay) and there's a political party called the Purple Party ready to serve the purpose. The Purple Party is a big group of second-rate people with dumb ideas, though none quite so dangerous that you're afraid to recognize their existence. You know they're second-rate because none of the cool and popular people, nor the professionals you look up to, are willing to openly align themselves with the Purple Party. All the right people are in the Yellow Party. Perhaps you haven't spent any time thinking critically about the ideals or policies or actions of either party, but your friends and role models love to bash the Purple Party and anything associated with it, and in fact, they're such a functionally perfect outgroup that there's no reason to learn any more about them.

By default you assume that claims from supporters of the Purple Party are false, or at least so misleading they're as good as false, and you assume that claims from supporters of the Yellow Party are true, or at least so beneficent they're as good as true; and the less the Purple Party likes a proposal from someone on your side the better it must be, even if it seems rather bizarre and perhaps quite dangerous at first blush. Indeed, you assume all this so intuitively that the surface impression rippling across your consciousness is of a man gravitating toward the truth and speaking his mind, nothing more nor less. Thus your social signaling has integrated you and your friends into a wider trust network oriented partly, though not exclusively, around the friend-enemy distinction—perhaps the very trust network Tom and Dick were building up in our previous section.

Your counterparts in the Purple Party, of course, have done the same thing, and each trust network exerts a repulsive effect on the other, driving their beliefs in the opposite direction. Since you'll become more popular with the right people if you pillory the Purple Party, you pepper your posts with a pickled peck of just such pillorying. You do it with moderation though: you're aiming to be a respectable salaryman, not an agitator. And it works perfectly. All the right people like you more, and the wrong people are powerless losers anyway—though you've figured out that it's standard practice to pretend they're powerful in order to assign them a greater share of blame than would otherwise be possible, and to portray you and your friends as plucky underdogs, which is, after all, kind of cool in TV shows and not a frame you'd be willing to cede to the other side.

In short, it's obvious to you that studying any of the sociopolitical issues underlying your beliefs in depth would be a total waste of time. You're just playing a signaling game—and you're winning. You carry the winning you learned on social media over into real life too. When you're chumming with other suits in the finance industry over drinks you profess the same beliefs and mock the Purple Party in the same way. They eat it up, because it confirms you're one of them. Success will soon be yours; you only have to sit down and stretch out a knee—just like that—and an aspiring trophy wife who's also learned to adopt the right beliefs will sit on it. The world is your oyster.

I hope you found these two stories entertaining, dear readers. They're admittedly a parodical, oversaturated, high-contrast version of reality, but not so unfair and exaggerated that you'd be justified in dismissing them. Millions of people operate in essentially the same way as our example signalers, but lack the capacity to reflect on their own behavior in such excruciating detail.

Without indicting absolutely everyone, I'll also mention that many self-identified moderates who aren't keen to firmly take the side of one party or another are still happy to jump in on particular issues (those with lower stakes and a clear majority position reducing the potential for blowback) where they play out the very same game without appreciably greater probity: moral grandstanding at the expense of preapproved scapegoats, staged safely within the bounds of conventional wisdom. Others remain silent but listen from the sidelines well enough to know which way the wind blows, and the right takes echo in their brains and shape their opinions even if they choose not to repeat them aloud. Certainly some people resist the whole charade, but not enough people; and because they don't play the game, their visibility in the social media ecosystem, and hence their impact on society, is too small to shift the balance of mass opinion.

The two parallel examples above display two different signaling regimes with the same primary function. Yet the first regime has a low cost to society, and perhaps even positive externalities (someone has to fund the arts, right? right?), whereas the second regime entails significant negative externalities on account of its high epistemic load.

I'll explain. Under the rock-and-roll signaling regime, music is serving two functions. The primary function is to signal personal characteristics and group membership. The secondary function is to provide aesthetic enjoyment. These two functions are imperfectly aligned (the best music and the music most effective for signaling are not the same), but little harm comes from the misalignment (financial incentives will favor signaling efficacy over aesthetic quality, but this is more of an irritation to musicians than a serious harm to society at large). For a discussion of the problems caused by signaling with aesthetic opinions, you can read my essay Against Good Taste. But the point that's relevant to us here is that under this signaling regime, the signaling load affecting general knowledge is low.

Now, under the social-media signaling regime, professed beliefs are again serving two functions, and the primary function is again to signal admirable personal characteristics and group membership. But this time the secondary, almost vestigial function is to represent reality and thereby enable society to make good choices. Remember that the typically selfish and unreflective individual signaler is motivated by the primary function, and pays the secondary function only the minimum attention that allows him to maintain the appearance of caring, and sometimes not even that. Because the two functions are poorly aligned and the secondary function makes a large impact on collective prosperity, this signaling regime has potentially enormous negative externalities. Yes, I'm referring to mass adoption of wrong or even outright crazy beliefs—the disastrous consequence of high epistemic load.

You might object that no one really believes the things they profess to believe for the purposes of signaling. If only this were the case! We've already pointed out that direct knowledge is often unavailable, so that ad hominems are the only means even the most intelligent people have of distinguishing true claims from false ones. Because these necessary ad hominem judgments are largely interpretations of signals, the beliefs that make for good signals also make their proponents seem more trustworthy—and because they emanate from these more trustworthy-seeming people, they're also likelier to be taken as true.

But that's not all, dear readers. Trendy false beliefs typically sound better than the truth, so they're very tempting to the ignorant. Educators seem to be the kind of people who are particularly enamored of them, and kids scarf them down like candy from strangers—their parents rarely bothering to warn them away. When they do accrue more experience they're slow to correct their errors. And for many adults too it's psychologically easier to make professed beliefs into genuinely held ones: by so doing they gain an advantage in public discourse, since they don't have to fight through the friction of subjectively felt dissonance when they're speaking. Natural liars and natural fools enjoy a similar advantage. In fact, the belief signaling regime is a dream come true for sociopathic status maximizers of all stripes, who extend the competitive lead over naive altruists they've enjoyed ever since village size exceeded Dunbar's number and cousin marriage was banned out to the seventh degree. Some of them are reading right now, laughing to themselves that Sanilac is wasting time combating socially advantageous lies on the meta level while they're doing more productive things, like making money and donating a tax-deductible fraction of it toward the advancement of animal welfare to price their lessers out of red meat.

Low quality people convincing themselves to believe the false claims they use to signal their high quality isn't the only problem. As the situation worsens, respectable public figures begin to shun the truth, so that when intelligent and upright citizens who aren't unnaturally absorbed in the relevant issues (that is, the vast majority) look to them for guidance, they're misled; before long lies become the conventional wisdom in polite society. Once false beliefs gain such social preeminence that it's taboo to refute them with the truth, public reasoning is obliged to take the lies as premises, and thus forced toward faulty conclusions one cannot refute honestly in the public square—even in cases where “everyone knows” they're wrong. Whence an odd phenomenon one observes from time to time like the aurora borealis of a society frozen by epistemic darkness: to promote the public good, well-intentioned advocates attempt to “refute” these faulty conclusions with dishonest arguments of their own, having been barred from presenting the honest arguments against them precisely because they depend on taboo premises! This never works very well, nor at least very long; and in the end, unprincipled exceptions are ferreted out and important public decisions are made on the basis of false beliefs even if everyone does know they're false. Sometimes these include the foundational assumptions of a nation or culture, setting future generations up for serious trouble.

In short, social signaling with false beliefs genuinely degrades knowledge and creates problems that can't be blithely waved away just by saying, “No big deal—it doesn't matter if people keep saying that, because everyone knows it's not actually true.”

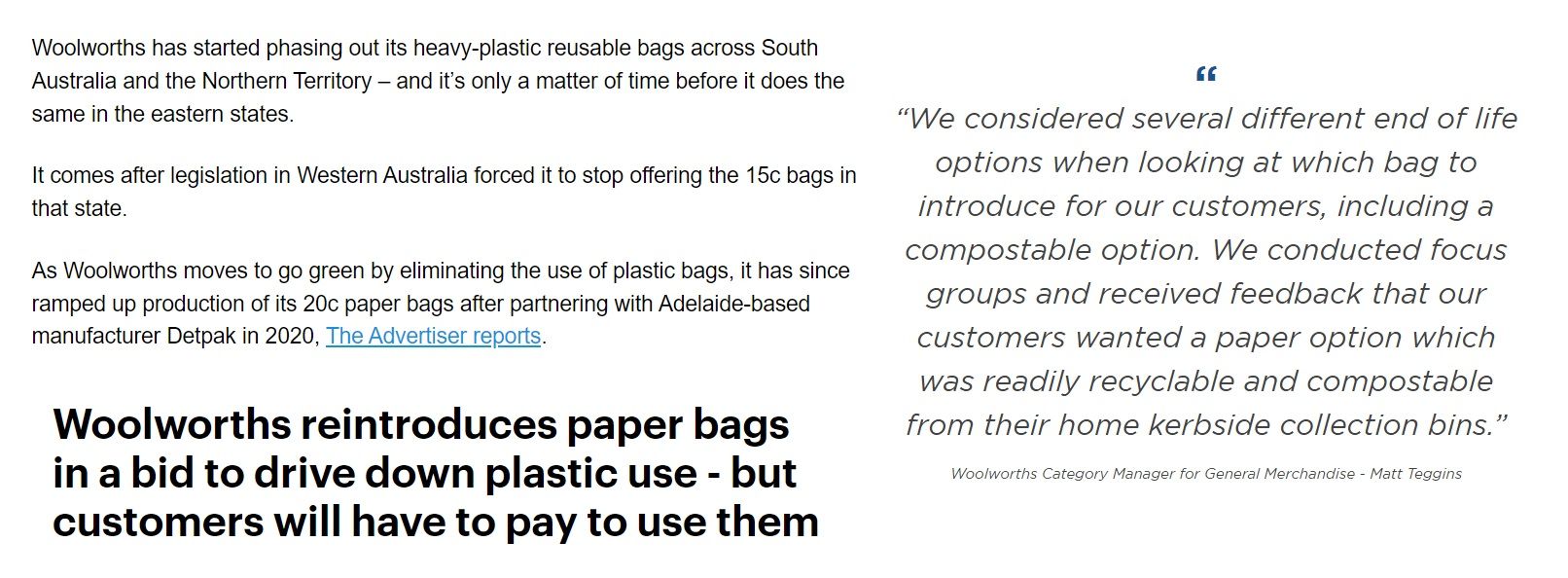

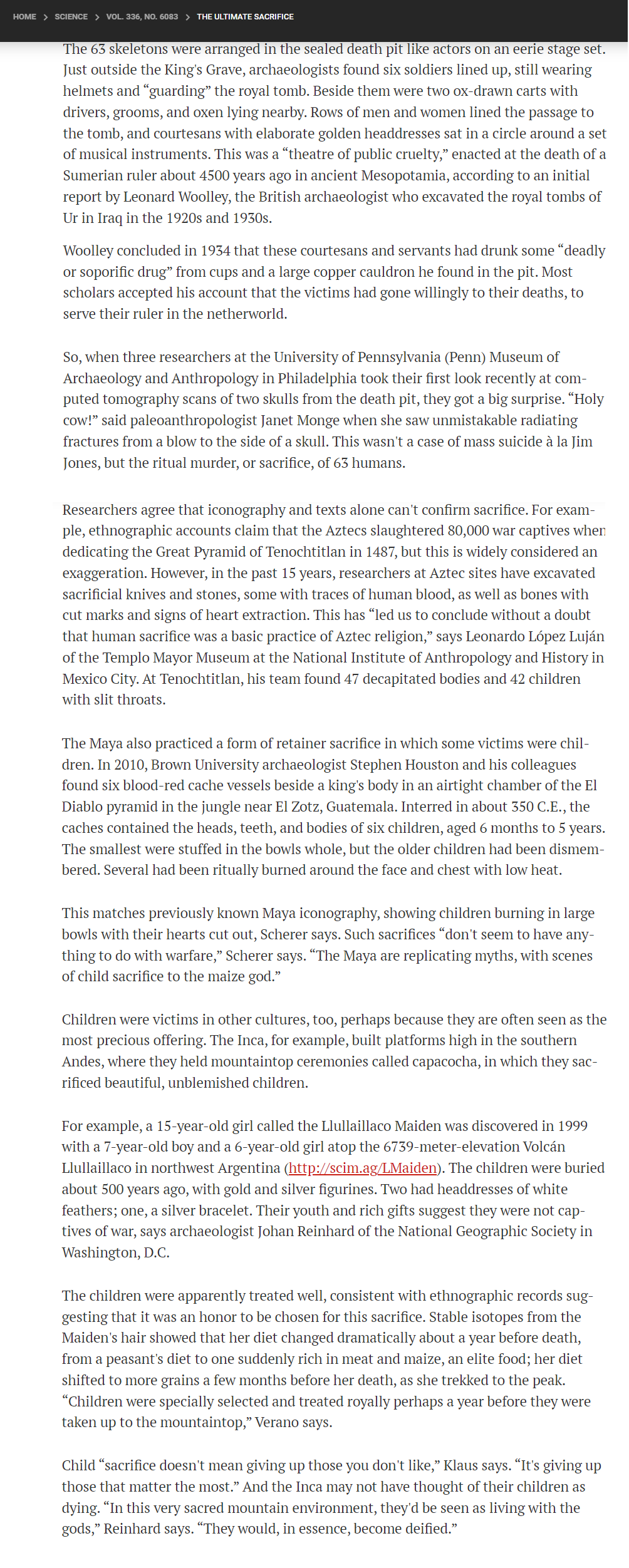

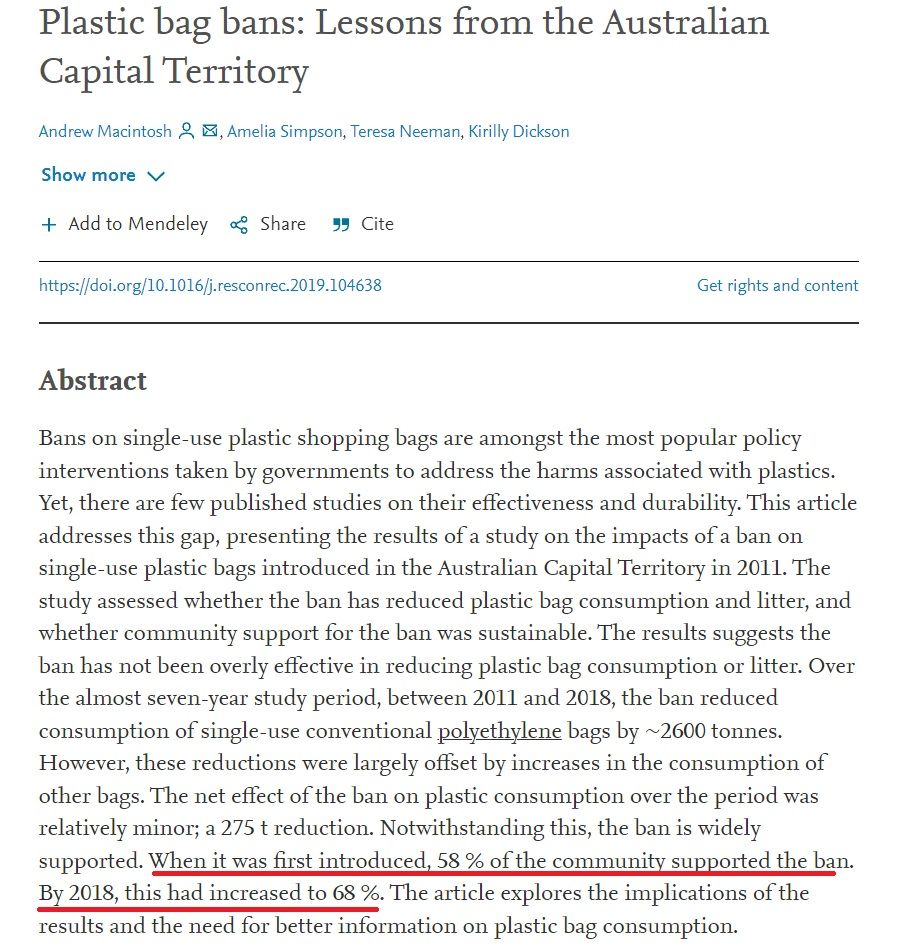

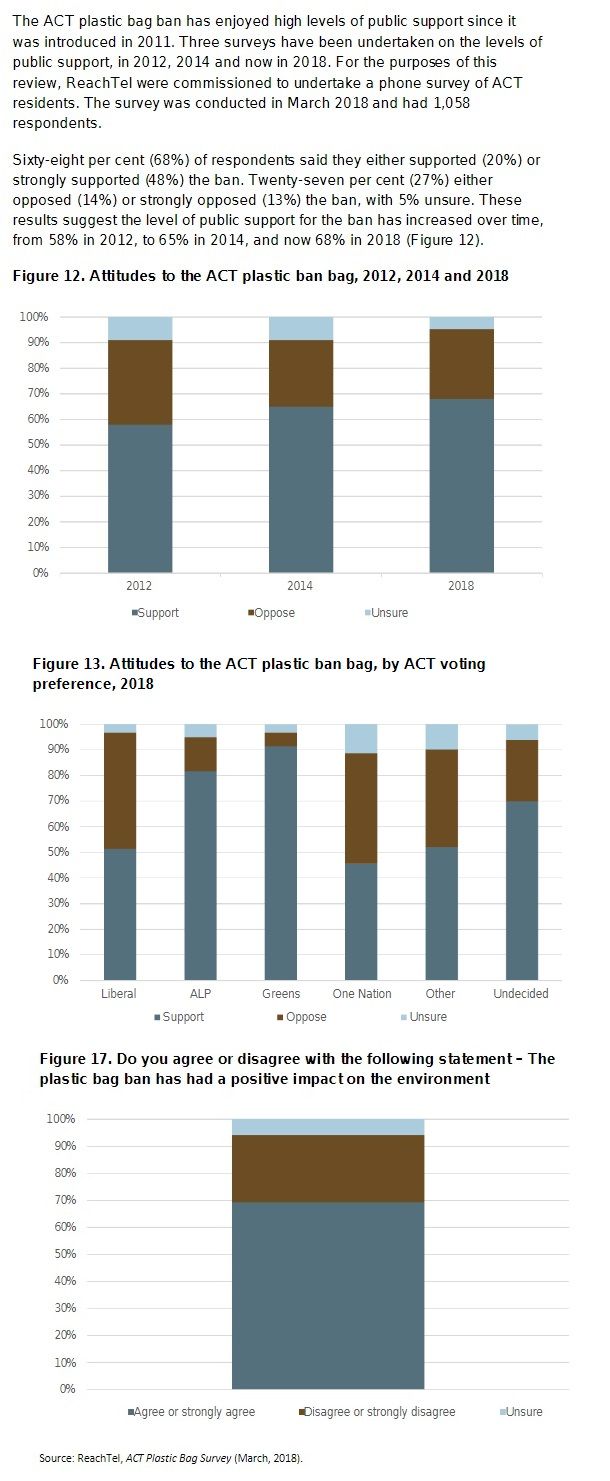

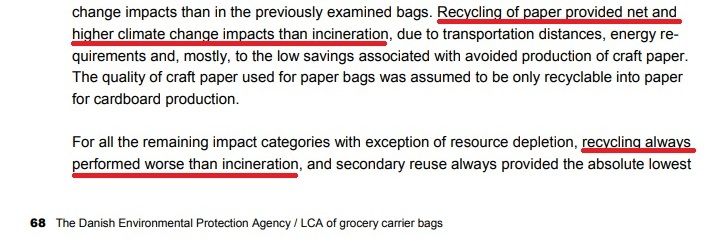

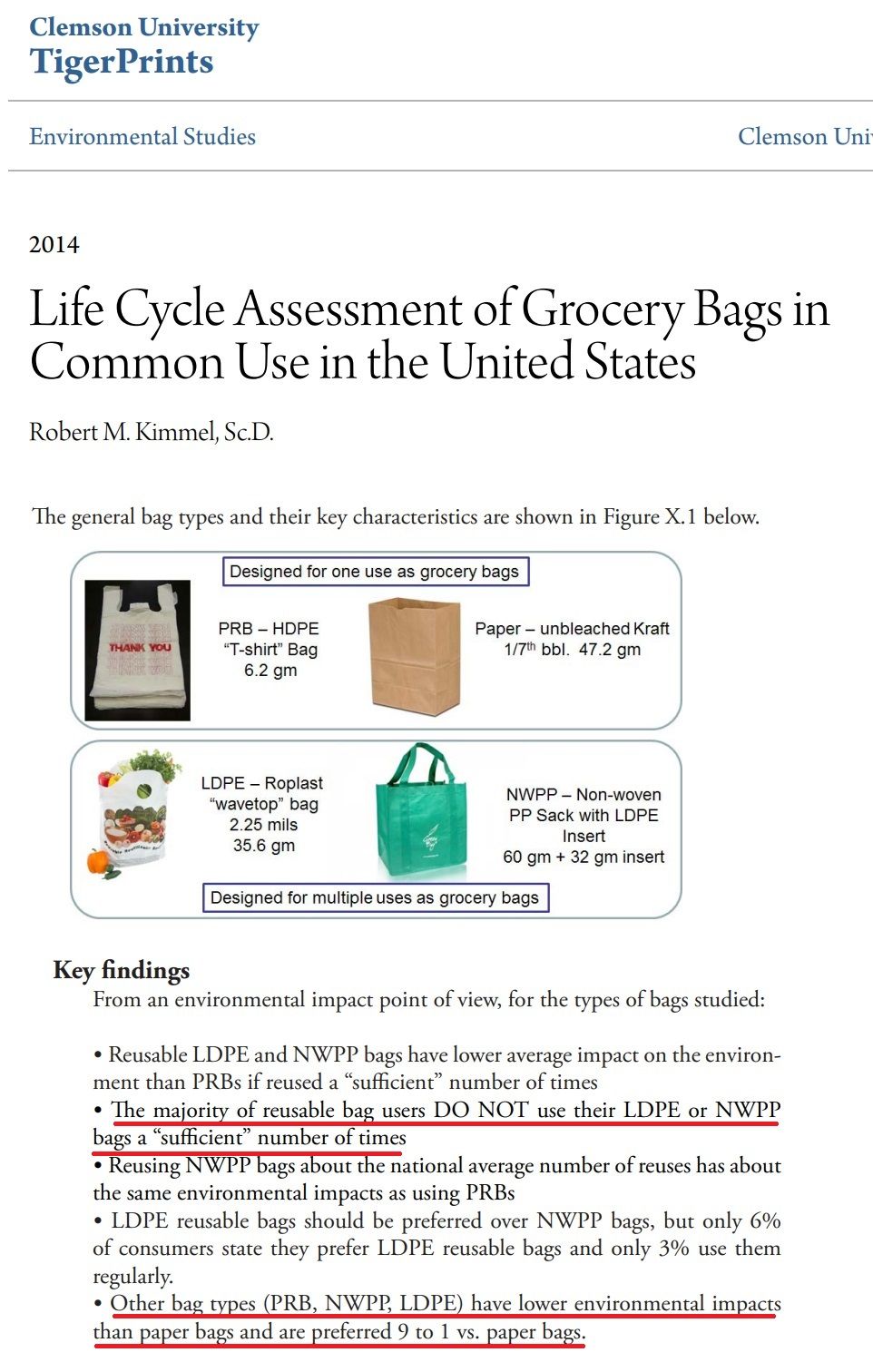

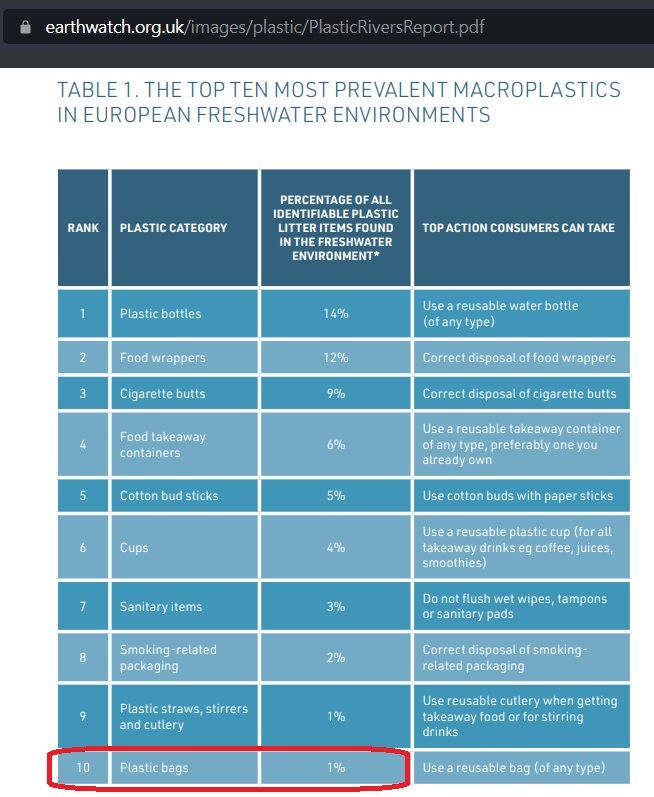

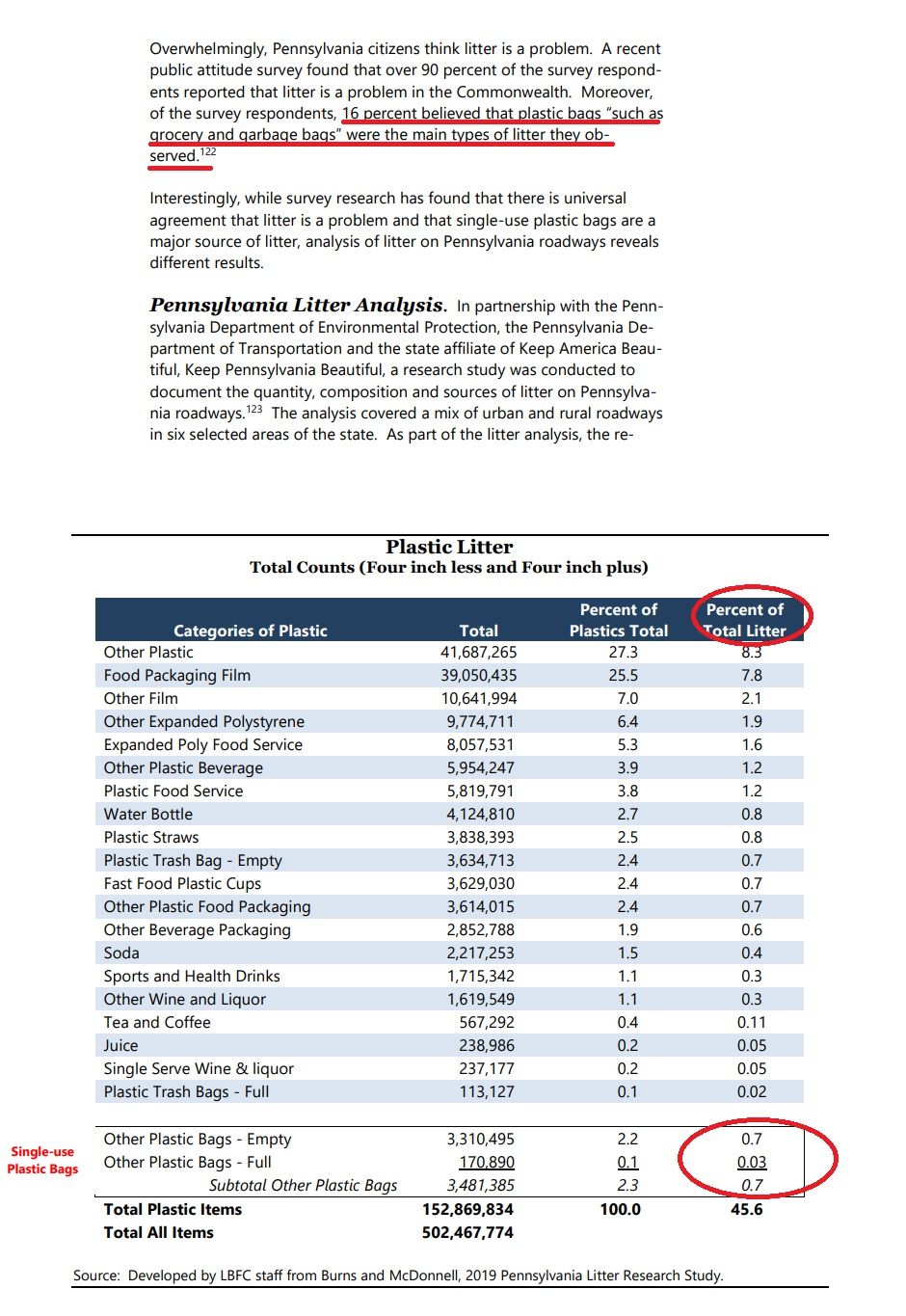

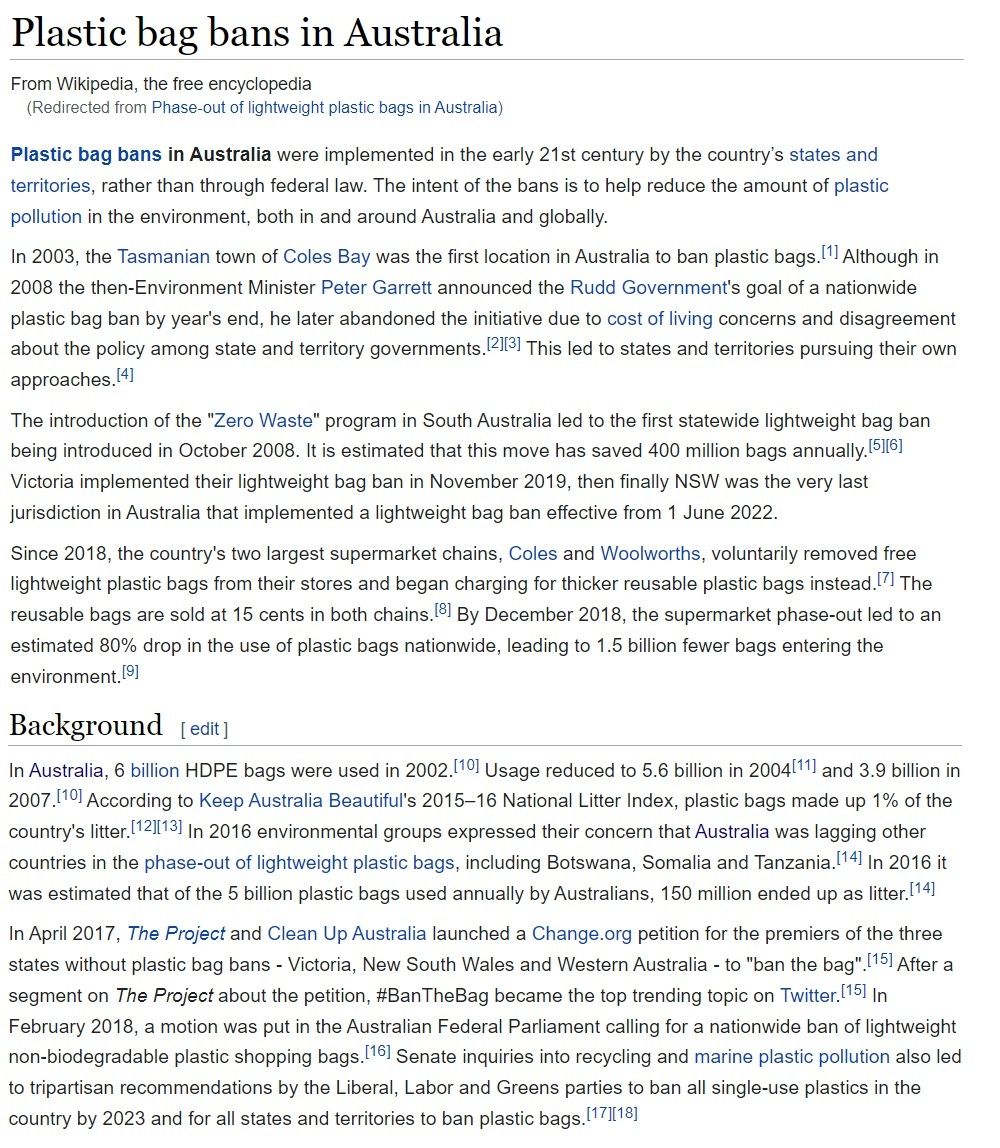

Now let's consider a more concrete example of signaling load: bag bans. They're only recently instituted and already a classic example of virtue signaling. I'm sure you're familiar with this concept. While real and useful, it's sometimes overused as a catch-all way of criticizing any virtuous behavior that could have dubious motives, even if that behavior might be genuinely virtuous, or simply have good effects. This criticism is misguided, because the most important element of beneficent social engineering is to align publicly recognized virtue with genuine virtue in order to maximize pursuit of the good. Be that as it may, our aim here is simple. We're just going to observe the effect virtue signaling has on collective beliefs.

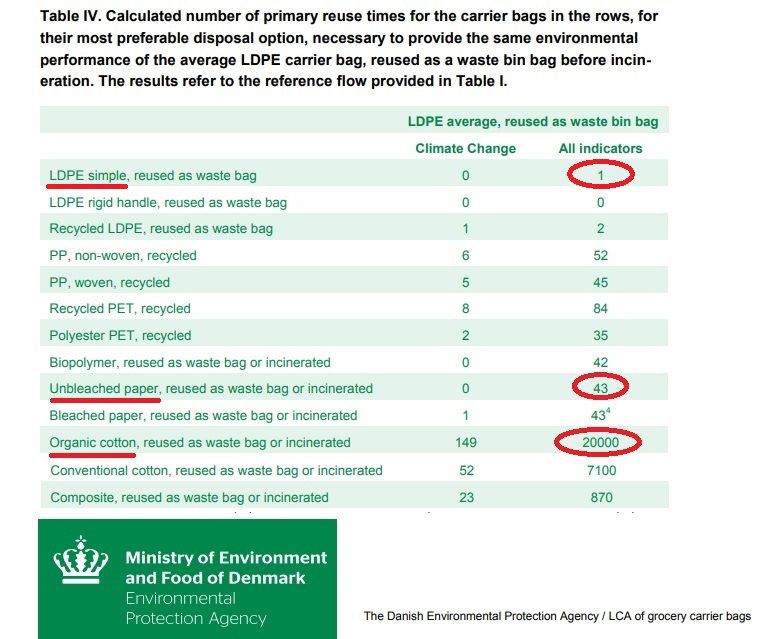

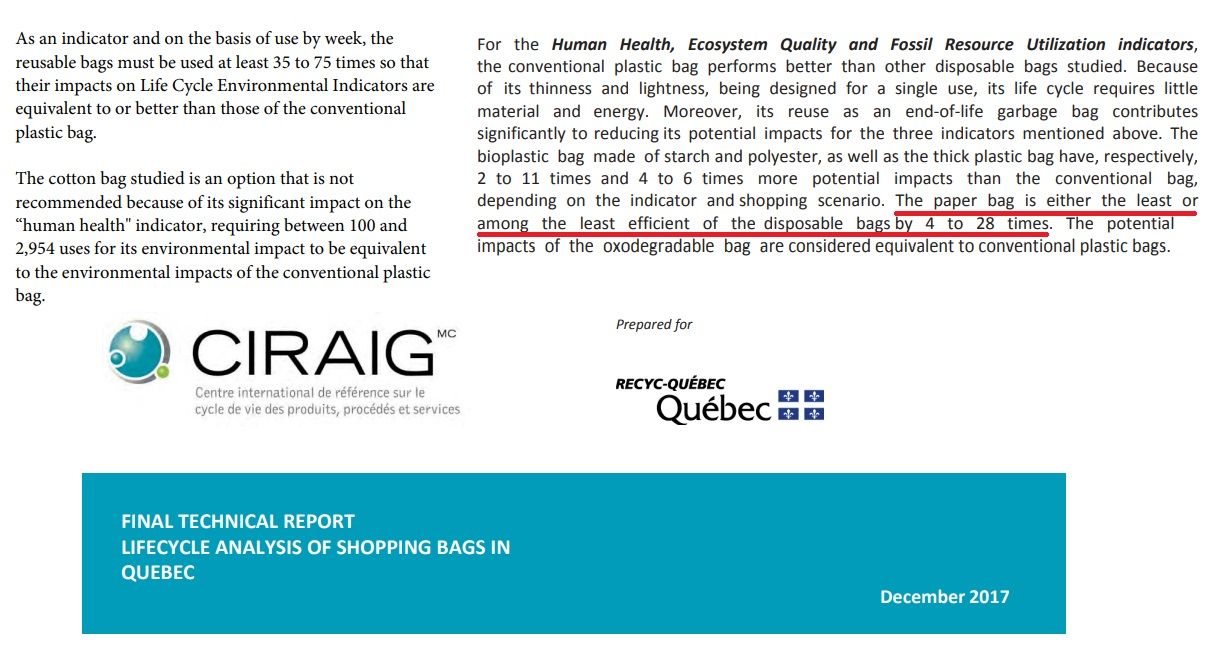

I'd like you to imagine, dear readers, that disposable plastic bags are better for the environment than paper bags, but most people aren't aware of this and don't have time to read research demonstrating it. And suppose paper bags seem/feel/sound more natural and therefore better for the environment (it's brown like tree bark and also kind of rough!) even though the reverse is true. And suppose further that “saving the environment” is the most publicly recognized locus for demonstrating care. In such a scenario individuals will draw a personal advantage from proclaiming that paper bags are better than plastic, spreading misinformation to this effect, and even supporting a campaign to ban the latter, creating the impression they're virtuous people motivated by high morals and concerned with the public good. If their campaign starts to take, many respectable public figures, who know well when to catch a rising tide, will join the chorus denouncing plastic.

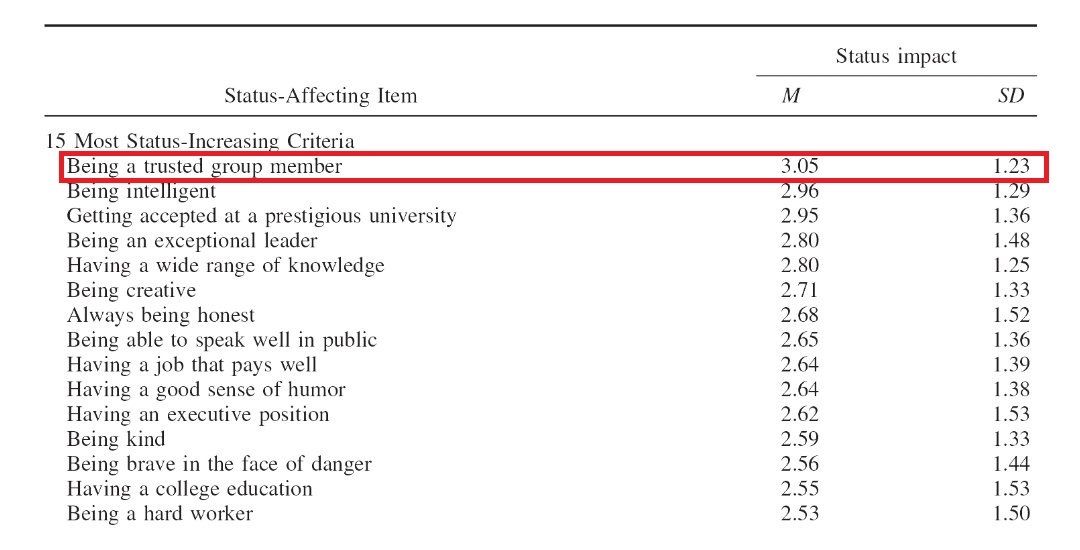

For the most ambitious social climbers even paper won't be a sufficient signal of how virtuous they are. Only reusable organic cotton bags will do. Sadly, while reusable organic cotton might send the most impressive signal, research shows it's actually the worst choice for the environment by a wide margin. Still—are you going to let yourself be caught with a cheap disposable plastic bag when all your friends are toting their locally grown kale home swaddled in organic free-range fair-trade cotton? Of course not. You'd look like the wrong kind of person—your status as a trusted group member would decline precipitously; and when you brag about the environmental benefits of your new hemp hiking boots, they wouldn't take you seriously. (Strangely, no one ever proposes to ban reusable organic cotton bags. If they really did care about the environment and wanted to go around banning things, they would.)

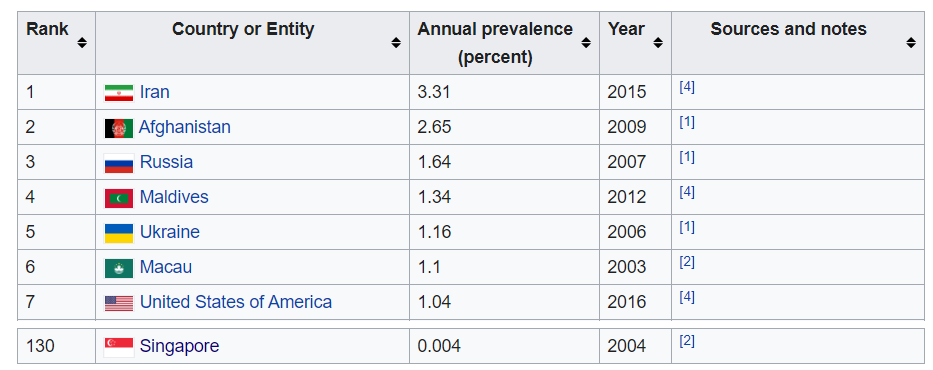

Paper and organic cotton are respectively 43x and 20,000x worse for the environment than plastic bags, according to the Danish EPA.

I spent about eight hours looking into the research behind plastic bag bans for the sake of these few paragraphs, and even such a substantial waste of time wasn't enough to clear up all the relevant ambiguities. It's possible, dear readers, that I've still overlooked some essential subtlety and drawn the wrong conclusions—in fact, maybe you shouldn't trust me! But the average voter won't spend eight seconds. He'll make a quick ad hominem judgment about the ban's proponents, whereupon their display of good intentions, bolstered by natural intuitions about words printed in green (green!) ink on rough brown paper and the sheer number of respectable people who seem to agree, will persuade him to believe the claims. Mainstream support will build into an avalanche, and retailers will loudly join in to make the inevitable a marketing opportunity. Eventually almost everyone will say and believe that paper is better than plastic—albeit not quite as good as reusable organic cotton.

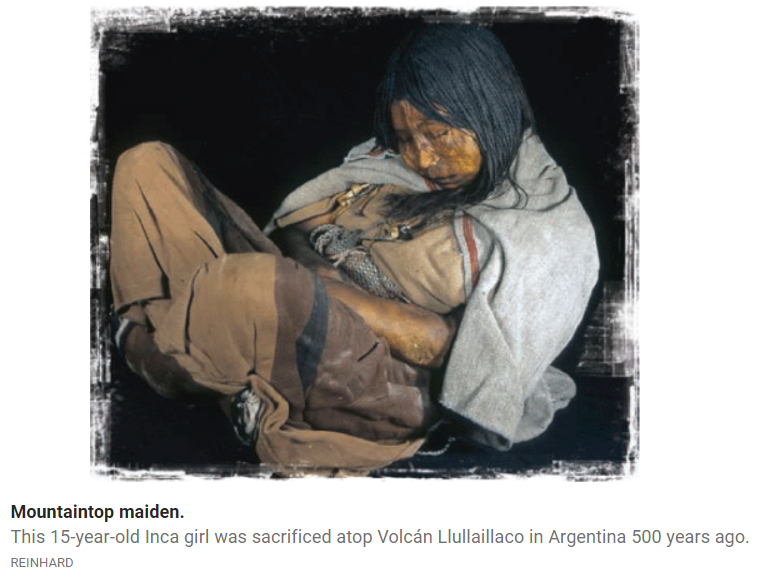

In short, the initial conditions generate a signaling load that gradually pulls the whole trust network into falsehood. These shared delusions lead in turn to harmful policies and behaviors which, much like child sacrifices and circumcision, have the curious effect of reinforcing the delusions; for it's psychologically difficult to countenance the possibility that you and everyone you know could have made or enforced sacrifices which in the best case serve no purpose at all, and in the worst are both destructive and completely retarded. Those who've sacrificed in vain seek out justifications for the unjustifiable to avoid the anguish of regret, and one can assume a weighty initiation sacrifice would improve cults' member retention markedly.

Sacrifices reinforce both group membership and the collective beliefs that demanded them, even if those beliefs are false.

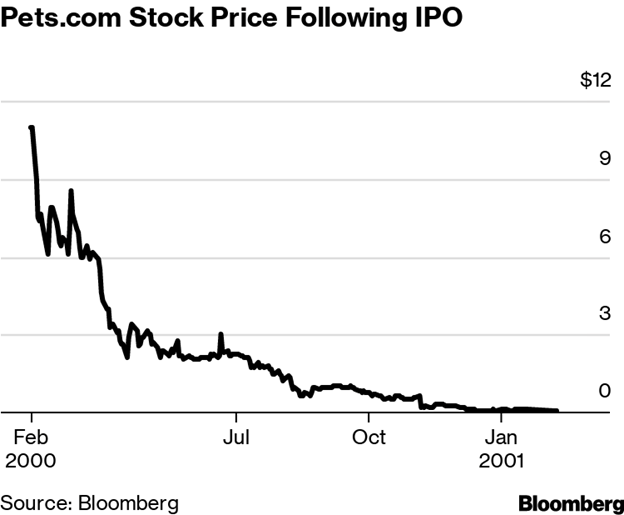

Public belief in the value of bag bans has increased since they were first enacted even though the evidence against them has grown stronger.

The example of bag bans is instructive for several reasons. First, our intuitions about environmental impact are here directly opposite to actual environmental impact. Reusable organic cotton sounds the best, but it's the worst; disposable plastic sounds the worst, but it's the best; paper sounds better than plastic, but it's not as good. Since the beliefs optimal for signaling—those that flatter our uninformed intuitions and present us to others as caring and self-sacrificing—are very far from the beliefs that accurately reflect reality, they produce an especially high signaling load.

Second, there's a feedback loop that further augments this signaling load. At first just a few brave pioneers leverage human intuitions about brown paper, organic cotton, and reusability to show care and win status points. When this works, more people join in, and by consequence the faulty intuitions begin to seem more reliably true to those watching from the sidelines, who make ad hominem judgments based in part on crowd size (simple rule: “many people saying the same thing are more trustworthy than one”). This encourages others to join the crowd, causing the faulty intuitions to seem more true, causing the signaling to be more effective, causing more people to join the crowd, etc., etc. If momentum is strong enough, eventually most people within the trust network believe the falsehood, and that falsehood becomes the default assumption even for smart and inquisitive people who haven't yet taken the time to inquire at length. Dissent is then punished with a loss of status points that locks in the lie, because as the false belief seems truer, dissenters seem both even more wrong and even less caring, and therefore lower status—and therefore even more wrong! This cascade effect is one reason why a dubious idea might take hold in one society but not another: small variations in the early stages may determine whether or not an avalanche occurs. (Note that the process we've delineated here can also launch true ideas to prominence, provided luck favors them with a signaling advantage.)
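The cascade described above can be pictured with a toy threshold model. This is only an illustrative sketch, not a description of any real population: it assumes each person joins the crowd once a personal threshold number of others have already joined, and the function name `run_cascade` and the threshold values are hypothetical.

```python
def run_cascade(thresholds):
    """Return how many people end up professing the belief.

    thresholds[i] is the (hypothetical) number of people who must already
    be professing the belief before person i is willing to join in.
    """
    adopters = 0
    while True:
        # Everyone whose threshold is met by the current crowd joins.
        joined = sum(1 for t in thresholds if t <= adopters)
        if joined == adopters:
            # Nobody new joined, so the crowd has stopped growing.
            return adopters
        adopters = joined


# Population 1: thresholds 0, 1, 2, ..., 99. One pioneer needs no company,
# the next needs one person, the next needs two, and so on.
smooth = list(range(100))

# Population 2: identical, except the person who needed one predecessor
# now needs two.
nudged = [0, 2] + list(range(2, 100))

print(run_cascade(smooth))  # 100: the avalanche sweeps the whole network
print(run_cascade(nudged))  # 1: the lone pioneer never finds a follower
```

The two populations differ in a single early threshold, yet one ends with everyone professing the belief and the other with a lone pioneer, which is the sense in which small variations in the early stages can decide whether an avalanche occurs.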

You're about to incinerate your paper bags to save the environment. But your uninformed neighbor is watching with a disapproving gaze and twitching his eyebrows in the direction of your recycle bin. He thinks you're a bad, uncaring person. What will you do?

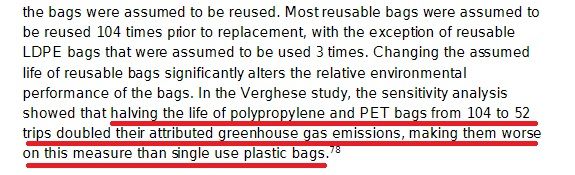

Incidentally, the performance of reusable plastic bags varies enormously depending on the number of times they're reused, and studies vary further in the number of reuses they consider to be a necessary minimum. Advocates for one side or the other can easily portray them as better or worse than disposable plastic bags by assuming whatever reuse numbers suit the conclusions they want to draw, whether these are practically possible or not, leaving them great leeway to mislead the public without lying directly. Take a moment to consider, dear readers, just how many opportunities there must be to lie about more complicated issues if it's so easy to create confusion about grocery bags! And in truth even this debate is disingenuous, because if the extra cost of reusable bags—estimated at $5 per capita by two independent studies—were simply levied as a direct tax and earmarked for environmental cleanup, we would attain a greater benefit with much less daily annoyance. But of course, such a tax would be useless for signaling—defeating the true purpose of the ban.

Whatever the truth turns out to be, both the economic inefficiency and the environmental harm caused by choosing the wrong grocery bags (quite insignificant) pale in comparison to the potential consequences when signaling load affects the collective beliefs relevant to issues with higher stakes and greater inter-group acrimony. I'll leave it to you to imagine what those issues might be.

Sadly the whole debacle is quite real. No, plastic bag litter doesn't justify the ban.

Let's summarize before we move on to the next section.

Humans use declarations and demonstrations of belief as social signals that increase their status and perceived trustworthiness within their group. Using beliefs as social signals interferes with their other function, namely to represent reality, by pulling them away from reality and toward the optimal signals instead. This signaling load isn't just a plebeian bias we can easily shrug off; it affects smart and open-minded people too, and the divide between privately held and publicly declared beliefs isn't wide enough to nullify it. That's because, as we established in Part I, most of our knowledge actually derives from ad hominem judgments in one way or another, and ad hominem judgments are profoundly influenced by signals of character, status, and group membership. Signaling load degrades our picture of reality, sometimes severely, and this degraded picture of reality leads to poor choices, which lead in turn to poor outcomes.

Signaling load isn't the only force degrading collective beliefs. In the next section I'll examine a second force I call partisan load.

C. Let's Kill A Virtuous Man (Partisan Load)

Imagine, dear reader, that you're holding a gun. You come upon a brawl between a known local blackguard and a visiting tourist of evidently respectable character. Their struggle is so vicious that one of them will surely die whether or not you intervene. Both combatants shout, “Rescue me! Shoot him!” Whom will you shoot? The blackguard, of course. Now imagine the same scenario, but you're at war. The gentleman is a brave enemy soldier; the blackguard is one of your countrymen, whose second-degree murder sentence was commuted so he could be pressed into the army. “Rescue me! Shoot him!” Whom will you shoot this time?

In each case you make an ad hominem judgment. But your answer changes, because as an inter-group conflict intensifies, the weight you give to the friend-enemy distinction increases at the expense of character traits like honesty, good judgment, and uprightness.
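One rough way to picture this shift in weighting is a score that blends character with allegiance, where the weight on allegiance rises as the conflict intensifies. The sketch below is a toy built on that assumption alone: the `Person` fields, the `trust` formula, and every number in it are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class Person:
    name: str
    character: float  # 0.0 = blackguard, 1.0 = thoroughly upright
    ally: float       # 0.0 = enemy, 0.5 = no allegiance either way, 1.0 = our side


def trust(person, conflict):
    """Blend character and allegiance.

    conflict runs from 0.0 (harmony) to 1.0 (total war) and is used
    directly as the weight given to the friend-enemy distinction.
    """
    return (1 - conflict) * person.character + conflict * person.ally


def whom_to_rescue(a, b, conflict):
    """Pick whichever combatant scores higher under the current conflict level."""
    return max((a, b), key=lambda p: trust(p, conflict))


# Peacetime brawl: neither man has any special claim on your loyalty.
blackguard = Person("blackguard", character=0.1, ally=0.5)
tourist = Person("tourist", character=0.9, ally=0.5)
print(whom_to_rescue(blackguard, tourist, conflict=0.1).name)  # tourist

# Wartime brawl: same characters, but now allegiance differs.
countryman = Person("countryman", character=0.1, ally=1.0)
soldier = Person("enemy soldier", character=0.9, ally=0.0)
print(whom_to_rescue(countryman, soldier, conflict=0.8).name)  # countryman
```

Nothing about either man's character changes between the two scenes; only the weight on the friend-enemy distinction does, and that alone reverses the verdict.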

The same pattern applies in the realm of knowledge. Ad hominem judgments of friend and enemy work as a kind of epistemic immune system, exerting a protective effect in circumstances where our enemies might want to deceive us. The price for this protection is a relative reduction in the weight we give to good character, which during harmonious times is more closely correlated with reliable sources of information. Even if it's beneficial during a conflict, partisanship can never lift our ability to learn new truths above where it stands in peacetime; it rather impairs knowledge production and degrades the accuracy of beliefs for all factions, and thus society as a whole.

There are, however, exceptions to this analysis. Curiously, the net knowledge degradation caused by inter-group conflict isn't directly proportionate to the level of inter-group violence. This is because a serious threat, like a war, tends to focus the mind on realities relevant to surviving that threat; whereas when the stakes are lower but blood runs high, posturing to win intra-group status competitions has a more favorable cost-benefit ratio. Further, when tensions are low, excessive displays of partisanship are selected against, because they reduce the perception of trustworthiness. One sees here that the brew of factors impacting ad hominem judgments is very complex and impossible to model fully in a conceptual analysis—yet we intuitively understand these complexities in the context of narrative examples and real life.

Antipathy between conflicting trust networks doesn't just deemphasize good character. It also encourages doubt, paranoia, and aversion to the claims of perceived enemies—even when they're true. This partisan load takes the form of a repulsive force driving the beliefs of conflicting trust networks in opposite directions. “The evil enemy believes X, therefore I believe not-X.” In its most extreme forms one can witness grown men playing Opposite Day like small children: “The evil enemy believes X, therefore I believe negative-X!”
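The repulsive force can be sketched as a toy dynamic between two networks. The assumptions are loud ones: beliefs live on a scale from -1 to 1, each network is pulled toward the truth with weight `1 - partisan_load` and pushed away from its rival's position with weight `partisan_load`, and the function `simulate` and all its parameters are hypothetical.

```python
def sign(x):
    """Return -1, 0, or 1 according to the sign of x."""
    return (x > 0) - (x < 0)


def clamp(x, lo=-1.0, hi=1.0):
    """Keep a belief inside the allowed scale."""
    return max(lo, min(hi, x))


def simulate(a, b, truth, partisan_load, steps=200, rate=0.1):
    """Evolve the beliefs of two rival networks and return their final positions.

    Each step, a network moves toward the truth (weighted by 1 - partisan_load)
    and directly away from its rival (weighted by partisan_load).
    """
    for _ in range(steps):
        push = sign(a - b)  # direction that moves a away from b
        new_a = a + rate * ((1 - partisan_load) * (truth - a) + partisan_load * push)
        new_b = b + rate * ((1 - partisan_load) * (truth - b) - partisan_load * push)
        a, b = clamp(new_a), clamp(new_b)
    return a, b


# Both networks start close to the truth (0.2), on either side of it.
calm = simulate(0.3, 0.1, truth=0.2, partisan_load=0.0)
heated = simulate(0.3, 0.1, truth=0.2, partisan_load=0.6)

print(calm)    # both values settle very close to 0.2
print(heated)  # (1.0, -1.0): the networks end at opposite extremes
```

With no partisan load the two networks converge on the truth; with a heavy load they finish at opposite ends of the scale even though both started near the right answer, and neither ends up closer to it than where it began.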

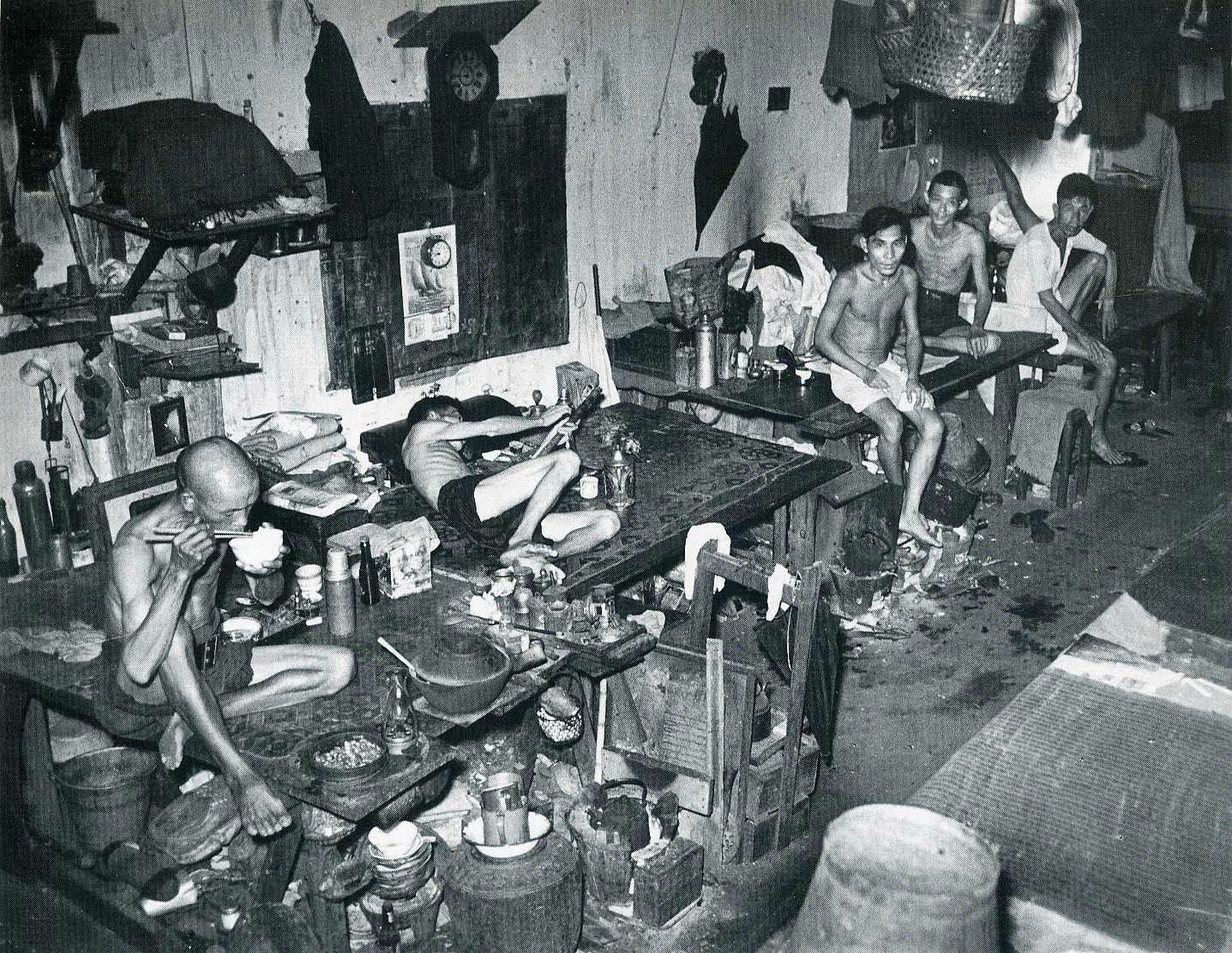

AIDS denialism in South Africa is an especially striking real-world example of the harmful effects of partisan load. In the 1990s and early 2000s, paranoia about racism and colonialism led the South African government to reject the Western scientific consensus that HIV causes AIDS and assert that the medicines developed by Western institutions to treat HIV were harmful rather than helpful. Denialists were able to trot out a few credentialed dissidents who supported these views to give their case the appearance of legitimacy and paint the medical establishment as corrupt. (N.B. dissidents are not always right, even when the system is broken.) Indeed, some South Africans believed Western medicines were intended to kill. If this seems farfetched, consider that vaccine skeptics in the West expressed a very similar range of sentiments in the polarized political climate surrounding the COVID epidemic, with the most extreme asserting that the new mRNA vaccines were part of a vast conspiracy intentionally aiming at mass murder or sterilization.

Denialism resulted in over 300,000 preventable deaths in South Africa between 2000 and 2005. While it's tempting to ascribe such a disaster purely to stupidity, long known to be the most powerful force guiding the behavior of human groups, this wasn't the only cause. It happened because the kind of ad hominem judgments we're all forced to make became heavily weighted toward the friend-enemy distinction in the context of an inter-group conflict, enabling the spread of paranoiac skepticism about information provided by a purported enemy. In short, it happened because of partisan load. While the death toll may not always rise so high, we see the same game play out whenever knowledge impacts divisive issues.

In the early days of the COVID epidemic, inter-group tensions pushed partisan load to the point of absurdity, degrading the quality of collective knowledge even on seemingly simple issues. The shift in beliefs about masks is especially instructive. (Perhaps my memory of these events is imperfect, but I've no wish to relive them by digging up old social media posts to confirm the story, so you may consider this a mere fable if you like.)

Under the direction of prominent members, one side, which we'll call Trust Network A, initially formed the collective belief that masks wouldn't help to reduce the spread of the disease. The other side, Trust Network B, had been primed by the high acrimony and antagonism of that year to doubt any position taken by Trust Network A, and believed to the contrary that this was a lie invented to cover up a shortage. Trust Network A vociferously denied this accusation. The advance guard of Trust Network B, always keen on self-reliance and preparedness for apocalyptic disaster (traits they fetishized to feed their never-to-be-realized dream of return to a Wild West where nature could be relied on to reward individual ability over parasitic social finesse) even began sharing recipes for homemade masks on social media.

And then, dear readers, before these recipes could spread from the fringes to the inattentive heartland of Trust Network B, Trust Network A flipped its position. Now Trust Network A collectively decided that masks did slow the spread of the disease, and promptly forgot its original position. It even jumped past this, and declared further that the very recently dismissed masks were so effective they should be mandatory! Thereupon Trust Network B, like a raging bull who'd not only seen red but was also being prodded from behind by spears, reversed its position too—and declared that masks do not, in fact, actually work. Not only that: some members of Trust Network B went further still, and declared that masks were harmful—that they were even worse than the disease they were intended to prevent! (Did they copy this playbook from the South African government, or was it just a happy coincidence?) When Trust Network A heard these refusals it was fired with antagonistic rage, like a skunk driven out of his burrow by wild dogs. Its members redoubled their insistence on mask mandates, demanded fresh mandates on other matters besides, and began advertising their masked faces as signals of epistemic solidarity—never mind that they'd all held the opposite view just a short while prior.

The question of whether masks do or don't reduce the spread of disease is a straightforward and objective one. There is, dear readers, only one right answer. But the bizarre reversals I've just described cannot be explained by a story of independent actors researching a question directly and arriving somehow at different conclusions. It's quite clear that the beliefs of both Trust Networks A and B were motivated by partisan load—which caused them to trust friendly sources without skepticism and reject enemy sources without consideration, even to the point of playing Opposite Day. Of course, since the question of whether masks help is as simple as X or not-X, partisans of one side ended up being correct (for our purposes here it doesn't matter which, and I won't venture my opinion). A tiny number of people may have even examined the available information with enough care to determine the right answer. Nevertheless, the great majority of them were only correct by accident, and deserve no more credit for the right answer than the Queen does when a flipped coin shows heads.

I should pause here to disabuse you of the mistaken notion that centrist, moderate, or neutral parties don't participate in trust networks and aren't subject to partisan load. Recall first that trust networks aren't closed containers, but chaotic webs which often lack clearly defined boundaries. Centrists are tied into these and have their own sets of trusted and distrusted sources. They are, moreover, subject to partisan load, but in their case it takes the form of forces repelling them from the extremes and pulling them toward the center—regardless of which beliefs are true or false judged on their own merits.

It's typical for centrists to lazily adopt the attitudes that allow them to minimize friction and get along with everyone. When it comes time to signal, they vigorously signal their normalcy, which amounts to a regurgitation of the acceptable opinions inculcated by the laugh track of late-night comedy television (designed for this purpose). So the idea that centrists are by nature the intellectual superiors of extremists isn't remotely true. Finally, even the most neutral parties still need to join sets of mutually trusting sources and engage in signaling in order to build an accurate picture of the world and function in human society (and as I'll explain in Part IVC, their relative indifference to partisan conflict is not necessarily so wise as it might appear). Thus the epistemic critique of partisanship in this section should not be read as a denunciation of taking sides as such, but as an admonition to subject your beliefs to the appropriate scrutiny if and when you do, in order to minimize the inevitable distortion.

Now I'd like you to consider, dear readers, a worst-case scenario for collective knowledge. Imagine you're living in a society split into two highly antagonistic trust networks that fight not on the honest, material plane of physical war, but on the slimy, smog-ridden plane of ideas. For signaling, your society relies largely on demonstrations of belief that show allegiance to one trust network or the other. Individuals who are more afflicted by partisan and signaling load and thus further from reality enjoy more influence, visibility, and power than the average person, and far more than is justified by the accuracy of their views. This applies to the intelligent as well as the stupid, because intelligence and resistance to epistemic load are distinct and poorly correlated traits. Indeed, smarties who are deluded or deceptive produce sophisticated rationalizations that can do greater harm than simplistic ones which are more easily seen through.

Does this sound familiar? It's a fair description of Occidental society in the age of social media.

Let's summarize before we move on.

Antagonism between conflicting trust networks causes their members to weight the friend-enemy distinction more heavily than other elements of character when they make ad hominem judgments, and to distrust and even invert the collective beliefs of enemy trust networks. I call the distortionary pressure this exerts on beliefs partisan load, and although it offers some protection against deception, it has the net effect of degrading the accuracy of collective knowledge relative to what it would be in the absence of conflict.

D. Let's Fight A Dirty War (Epistemic Load)

So, both signaling load and partisan load degrade our knowledge. They also overlap with and amplify each other, and their combined effect is more than merely additive. I'll call the sum of all forces inducing knowledge degradation epistemic load. For reasons I'll discuss further on, some forms of social organization induce a higher epistemic load than others, and some realms of knowledge are inherently prone to a higher epistemic load than others (the hard sciences are relatively, but not entirely, immune).

Signaling load and partisan load aren't the only forces that contribute to epistemic load, but their effects on collective knowledge are the most complex and the most worthy of extended analysis. One could easily define others and produce a fairly conventional list of self-explanatory biases. I'll mention a very simple and familiar one in passing: incentives. Men tend to believe things they're incentivized to believe, whether or not they're true. Homeowners, for instance, usually believe it's good for society when the cost of buying a house continually increases, even though this is manifestly false. The best solution is to turn this negative into a positive by incentivizing correct beliefs, e.g., by paying people for good outcomes or correct predictions, as in betting markets. Unfortunately it's often impossible to do this, and instead false beliefs or at least false professions of belief are incentivized in practice.

I'll illustrate the combined effects of signaling and partisan load with a recent example. Before I continue, please remind yourself that our purpose here is only to understand how people form their beliefs. For this reason we're once again not interested in which side is correct. To help you set aside your opinions about the issue I'm going to write in the past tense, as if I'm telling a story. (If you think you can discern my own opinion from the following text, you're wrong.)

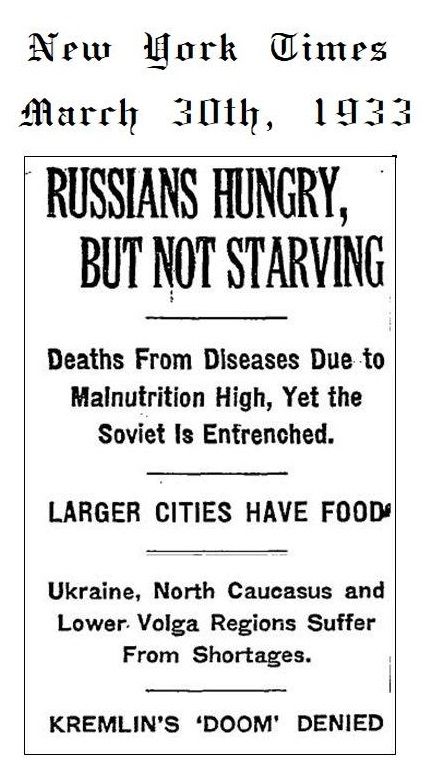

The two sides in the anthropogenic climate change debate of the late twentieth and early twenty-first century were often incorrectly portrayed as “pro-science” and “anti-science.” While there were some genuinely anti-science doubters, the true source of disagreement about climate change was not the validity of scientific method. What doubters were doubting was whether the people and institutions carrying out the method could really be trusted. Internal consensus isn't irreproachable proof of trustworthiness, because partisans (like Tom and Dick from our earlier academic example) can potentially promote their allies and squeeze out dissenters until institutions are dominated by a single unified trust network telling the same slanted story.

The critical point omitted from virtually every discussion of the issue was that detailed climate models are simply too complex for the lay public to understand or verify. It's fundamentally impossible for politicians and voters to make decisions about issues like these without relying on ad hominem judgments of institutions, researchers, and advocates—and this limitation applies to both doubters and believers. The two sides drew opposite conclusions because they differed in their views of who was a friend and who an enemy, who was reliable and who unreliable. Neither side was keen to acknowledge that their positions were derived from ad hominem judgment rather than direct knowledge, because such an admission would have weakened their credibility in public debates where the appearance of scientificity was an advantage. So they ended up talking past each other, trading graphs and equations when only open discussion of character would have gotten to the heart of their disagreement.

When we're unable to carry out a direct verification, whether because we can't see our opponents' cards in a game of poker or because we don't have years to learn the necessary scientific background in a game of climate models, we determine the validity of information by judging its providers, sometimes on a frankly partisan basis. “What's their track record?” “Do they have a motive to deceive us?” “Do they agree with us about other issues?” “Are they our kind of people?” “Who, whom?” etc. But to bolster our case and our ego many of us pretend, both to ourselves and others, that we've drawn our conclusion on the basis of direct knowledge to which we've in fact no access. Unfortunately, the pretense that we are or even can be above ad hominems bars the way to reflective self-criticism—and thus decreases rather than increases the accuracy of our beliefs.

The climate change debate shows how partisan load and signaling load can overlap and amplify each other, creating a cascade effect still more intense than that described in our bag ban example. The amplification occurs because beliefs about climate change are affected not only by partisanship and by signaling, but by the signaling of partisanship. I'll narrate this cascade in the abstract and leave you the joy of applying it to the particulars of the case.

- Prominent members of Trust Network A endorse Position X, while prominent members of Trust Network B denounce Position X.

- The rest of Trust Network A endorses Position X because they trust their prominent members and distrust their enemies' prominent members; the rest of Trust Network B denounces Position X for the same reasons.

- Position X becomes ubiquitous in Trust Network A, while Position not-X becomes ubiquitous in Trust Network B.

- Because of this ubiquity, Position X can serve as a signal of allegiance to Trust Network A and Position not-X a signal of allegiance to Trust Network B, each increasing status and trustworthiness within those respective networks and decreasing it in the opposing network.

- This further incentivizes members of Trust Network A to loudly endorse Position X and members of Trust Network B to loudly denounce Position X.

- Because even more members of Trust Network A now endorse Position X even more loudly, it seems even more true to members of that trust network and becomes an even more widely recognized signal of allegiance. And the same happens for Position not-X in Trust Network B.

- Open dissent is strongly disincentivized because it causes one to appear disloyal and thus lose status within one's trust network.

- Now both trust networks are locked into opposing beliefs and hold them with much greater confidence than was justified by the initial information. Even if further evidence eventually proves one side right, the other will cling to its mistaken beliefs for an unreasonably long time, because whoever breaks rank first will seem traitorous and suffer a loss of status.
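
For readers who prefer to watch a mechanism run, the cascade above can be sketched as a toy simulation. Treat it as an illustration under loudly stated assumptions, not a model of any real debate: the function name `simulate_cascade`, the parameters `evidence`, `trust`, and `signal_gain`, and the update rules themselves are all hypothetical choices made for this sketch. Each network is reduced to a single number, the mean professed confidence in Position X.

```python
def simulate_cascade(rounds=6, evidence=0.55, trust=0.5, signal_gain=0.5):
    """Toy mean-field sketch of the cascade; all parameters are hypothetical.

    Tracks the mean professed confidence in Position X (0 = certain it is
    false, 1 = certain it is true) inside Trust Networks A and B.
    Parameters are assumed to lie between 0 and 1.
    """
    a = b = evidence                 # both networks see the same initial information
    history = [(a, b)]

    # Bullets 1-2: the rank and file move toward their own prominent members,
    # who endorse X in Network A (1.0) and denounce it in Network B (0.0).
    a += trust * (1.0 - a)
    b += trust * (0.0 - b)
    history.append((a, b))

    for _ in range(rounds):
        # Bullets 3-6: the more ubiquitous a position is inside a network,
        # the better it works as a loyalty signal, and the stronger the pull
        # on the remaining holdouts to profess it.
        ubiquity_a = a               # prevalence of X in Network A
        ubiquity_b = 1.0 - b         # prevalence of not-X in Network B
        a += signal_gain * ubiquity_a * (1.0 - a)
        b -= signal_gain * ubiquity_b * b
        history.append((a, b))
    return history


for round_no, (a, b) in enumerate(simulate_cascade()):
    print(f"round {round_no}: A={a:.3f}  B={b:.3f}  gap={a - b:.3f}")
```

Note that the initial evidence enters only at round 0 and never again. That is the sketch's crude stand-in for the last two bullets: once signaling takes over, professed confidence in each network drifts toward certainty regardless of what the original information justified.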

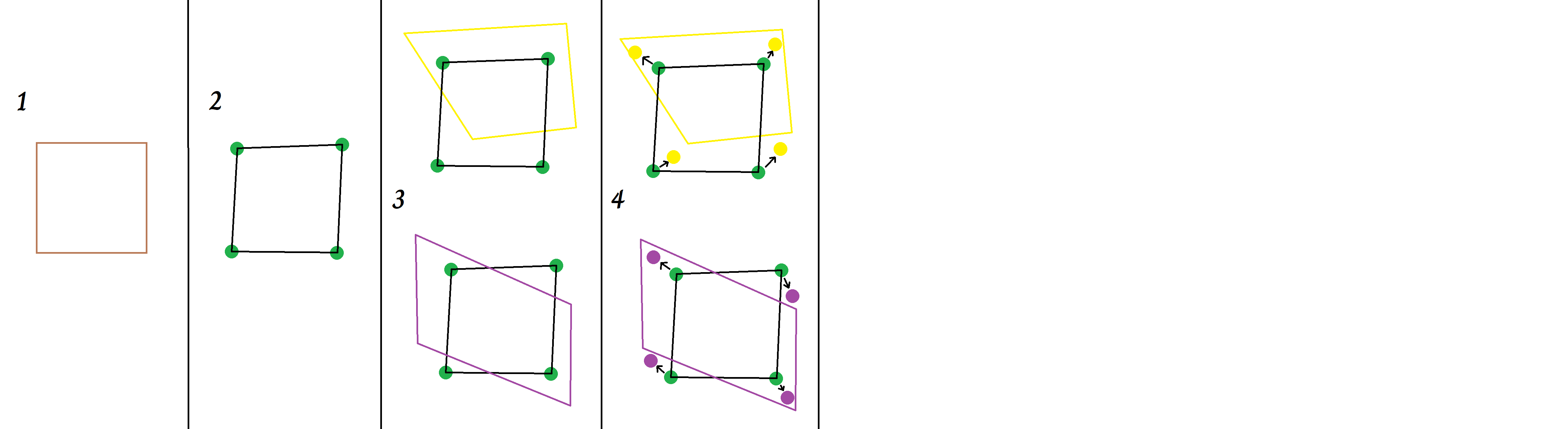

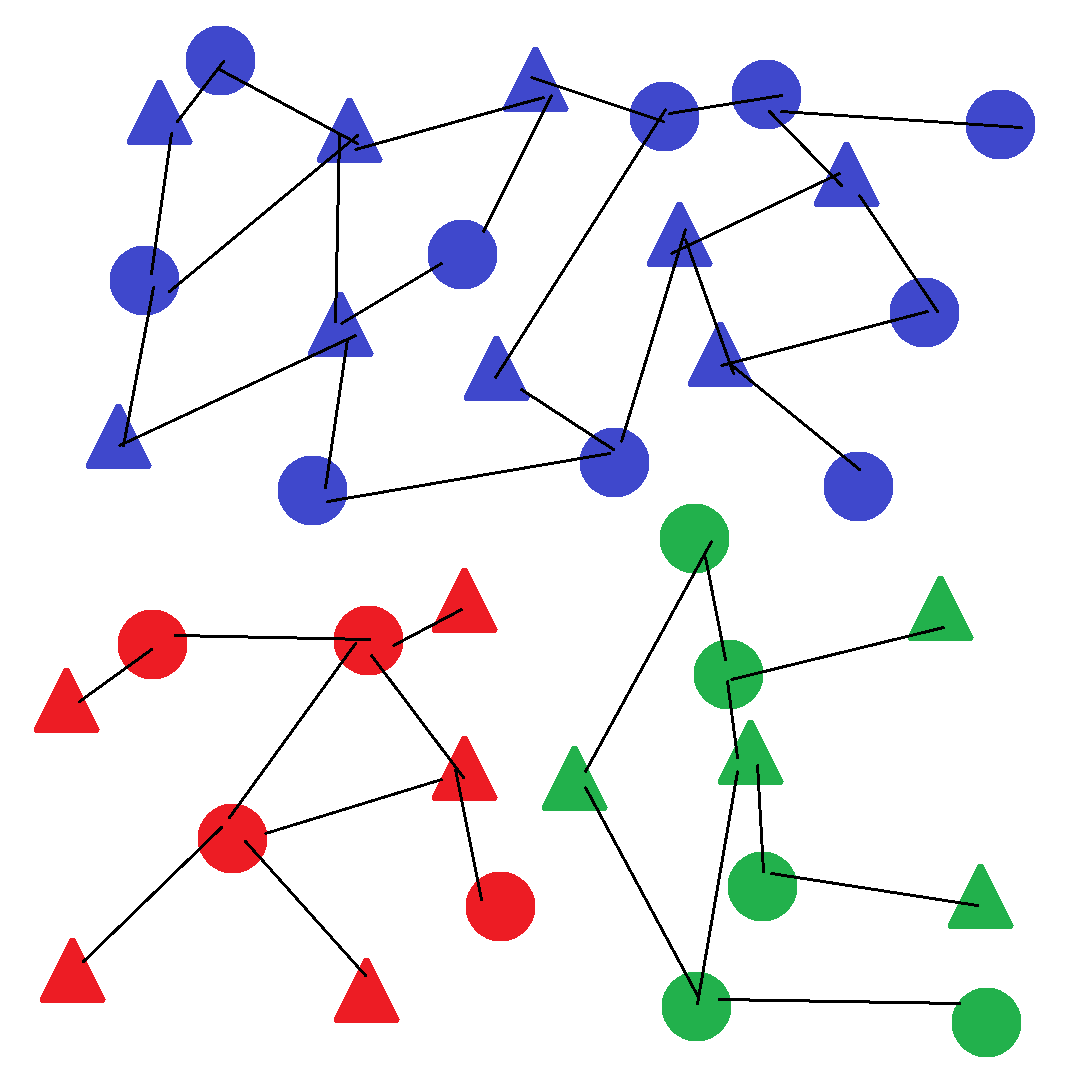

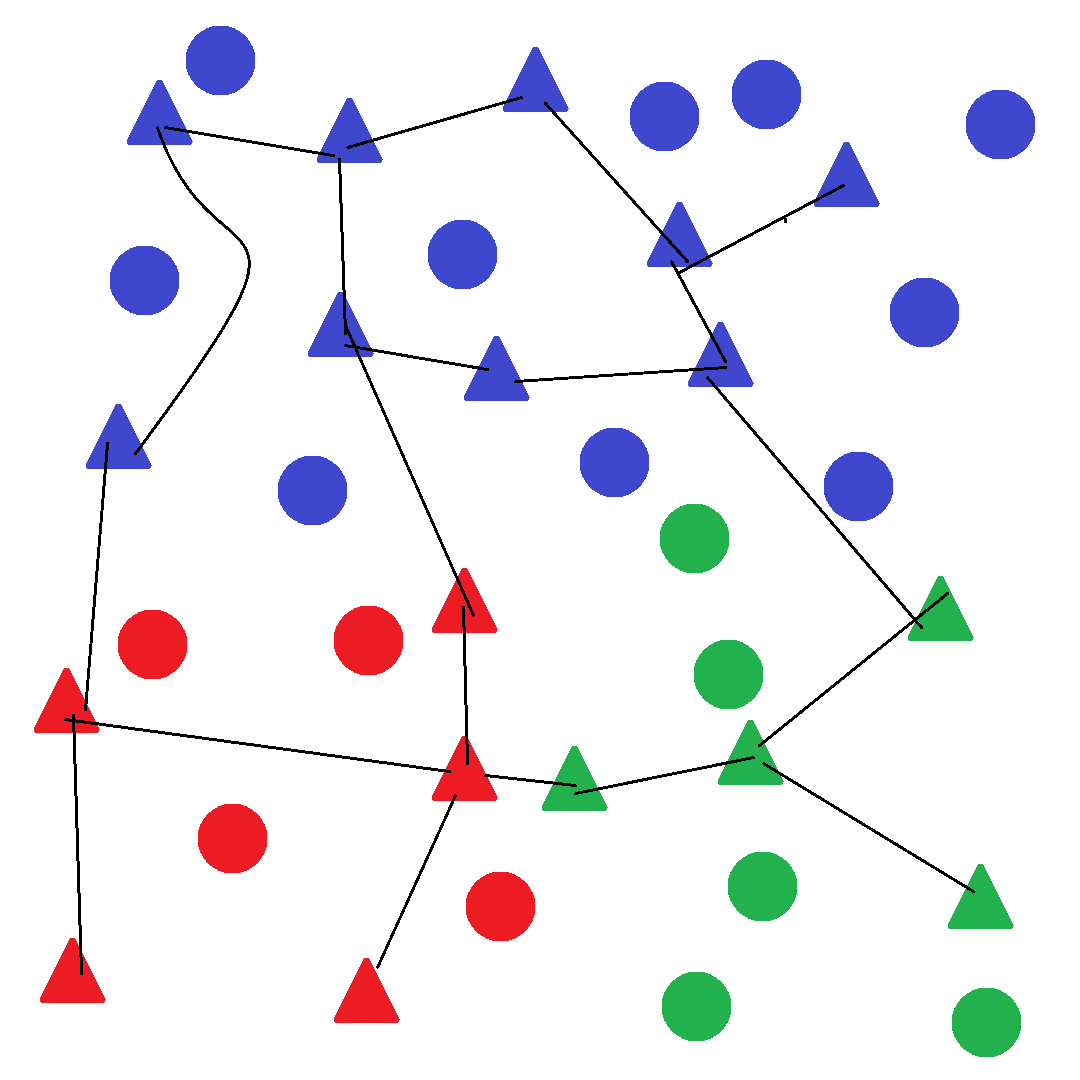

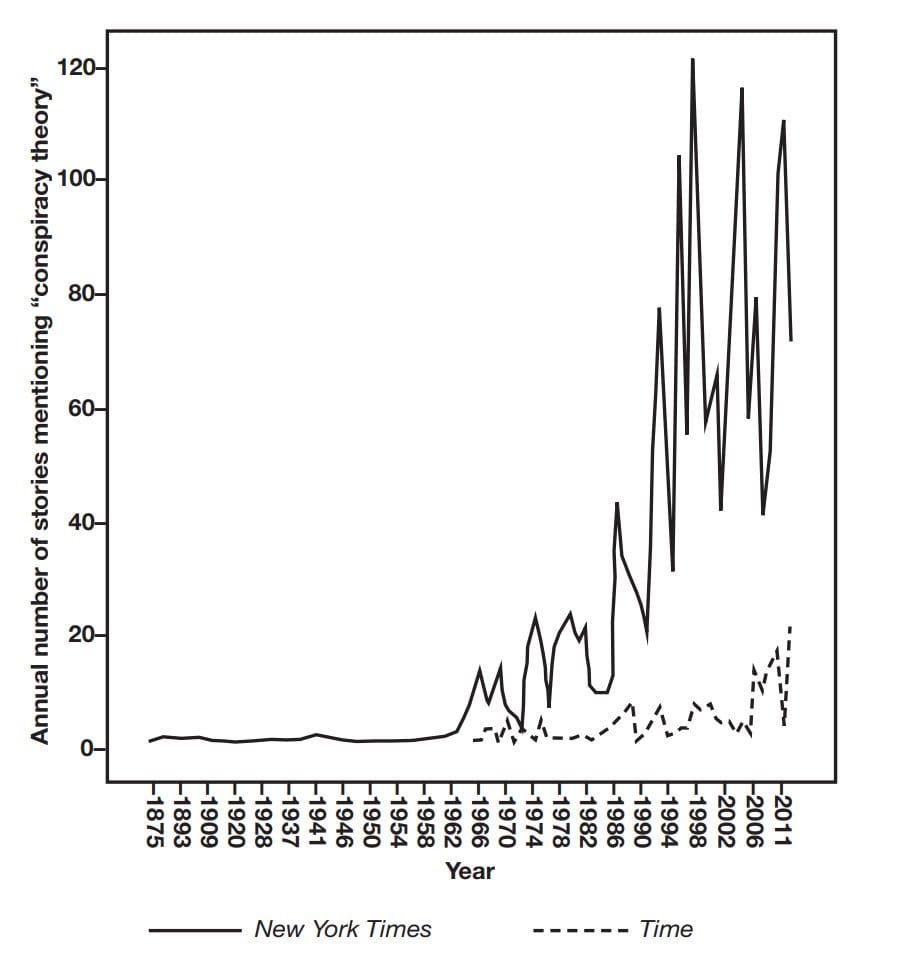

As a final summary, here's a geometrical rendering of how partisan load and signaling load work together to degrade knowledge and increase the divergence of trust networks.

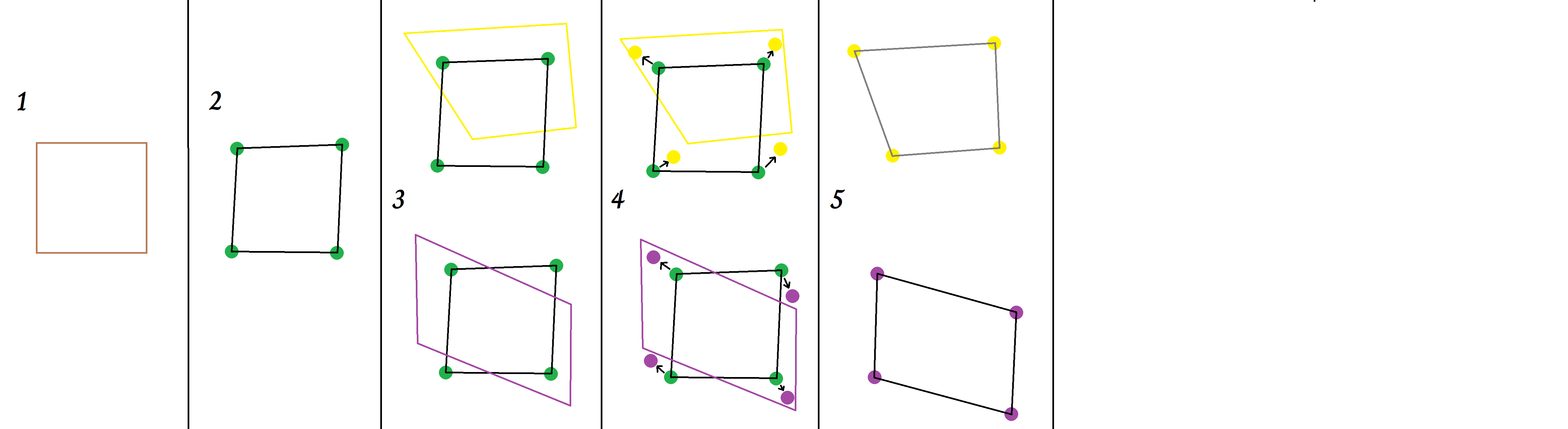

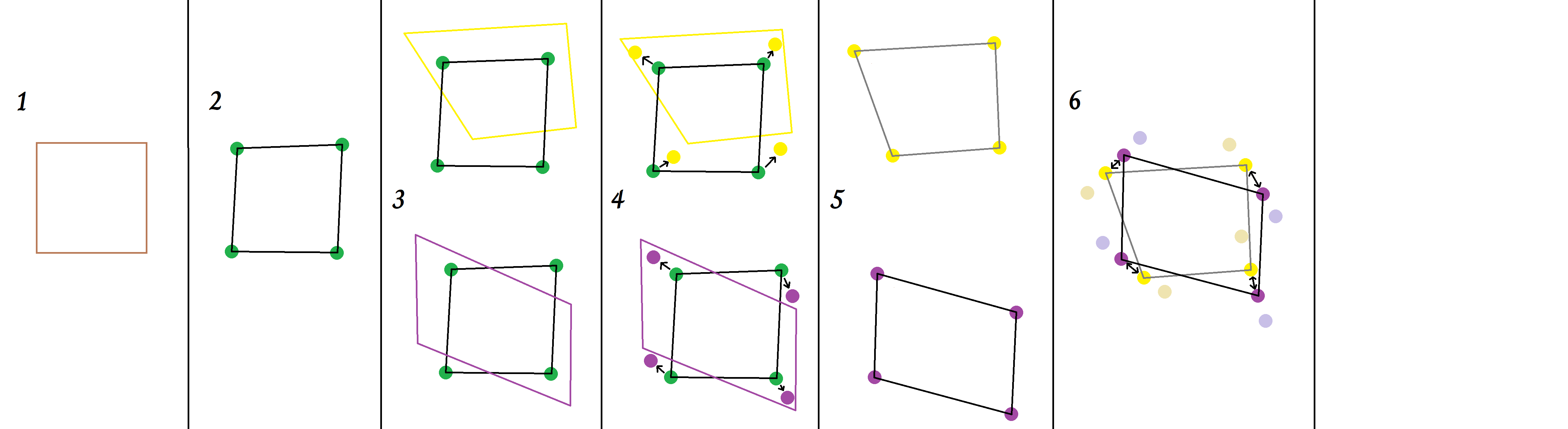

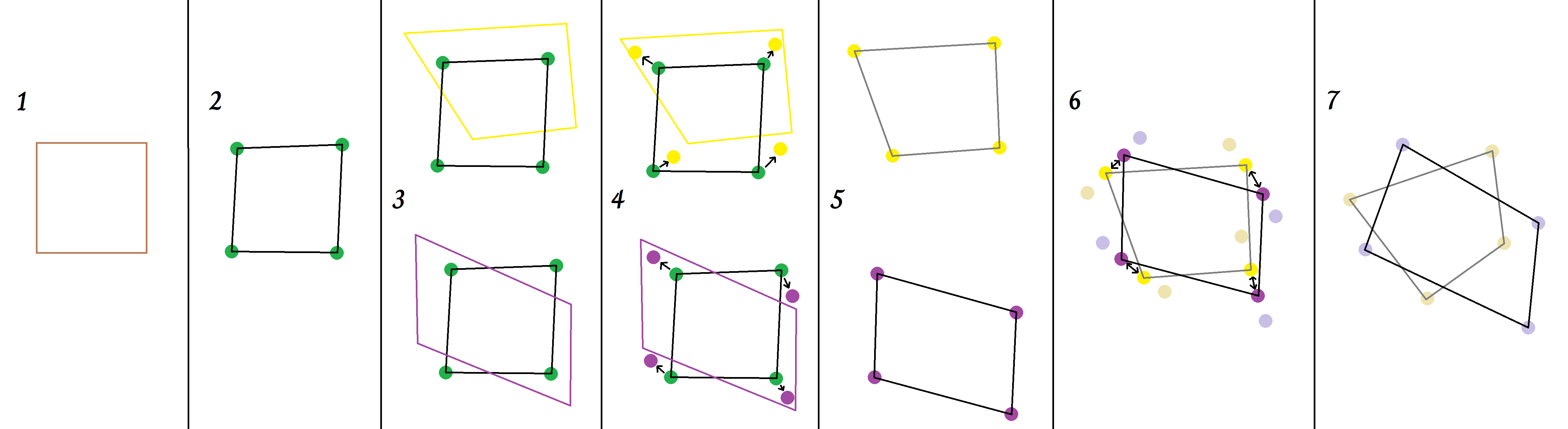

The brown square in Panel 1 represents a real object whose shape is not immediately obvious to observers. Suppose that in the absence of any epistemic load, we would estimate the location of its vertices at the green dots in Panel 2. These beliefs generate an imperfect but recognizable approximation (the black polygon) of the real object (the brown square).

The yellow and purple polygons in Panel 3 represent the shapes one would publicly describe to maximize personal benefit from social signaling in two different trust networks. Note that these optimal signaling shapes are not the same for the two networks; nor do they match the real object. We can think of the vertices of both the original brown square and the two colored polygons as exerting an attractive force on beliefs (the green dots) that increases with distance. This is shown in Panel 4. The attractive force exerted by the two colored polygons is signaling load, and its magnitude varies depending on the circumstances.

When this signaling load shifts beliefs toward the colored polygons, the new equilibrium beliefs end up in different locations and describe different shapes (Panel 5).

Now, suppose the two trust networks are antagonistic. Their beliefs will exert an additional repulsive force on each other, pushing them toward the pastel gold and lavender dots as shown in Panel 6. This repulsive force is partisan load, and once again its magnitude varies depending on the circumstances.

Panel 7 shows the final shapes described by the collective beliefs of two antagonistic trust networks. They now bear little resemblance to the real object seen in Panel 1.
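
The same picture can be sketched numerically for a single vertex. What follows is a minimal illustration with assumptions of my own choosing, not a derivation: attraction is modeled as a linear spring (one simple way for a force to increase with distance), partisan repulsion as a push of constant magnitude directly away from the other network's belief, and the names `settle`, `k_truth`, `k_signal`, and `repulsion`, along with all coordinates, are hypothetical. For simplicity both networks start from the same unloaded estimate.

```python
import math


def _step(x, springs, other, repulsion, rate):
    """Move belief x one small step under spring attraction and repulsion."""
    fx = fy = 0.0
    for (tx, ty), k in springs:          # attraction grows linearly with distance
        fx += k * (tx - x[0])
        fy += k * (ty - x[1])
    dx, dy = x[0] - other[0], x[1] - other[1]
    d = math.hypot(dx, dy)
    if repulsion > 0 and d > 0:          # constant push away from the rival belief
        fx += repulsion * dx / d
        fy += repulsion * dy / d
    return (x[0] + rate * fx, x[1] + rate * fy)


def settle(start, truth, signal_a, signal_b, k_truth=1.0, k_signal=1.0,
           repulsion=0.0, rate=0.1, steps=300):
    """Return the settled beliefs (a, b) of two networks about one vertex."""
    a = b = start
    for _ in range(steps):
        a_next = _step(a, [(truth, k_truth), (signal_a, k_signal)], b, repulsion, rate)
        b_next = _step(b, [(truth, k_truth), (signal_b, k_signal)], a, repulsion, rate)
        a, b = a_next, b_next
    return a, b


def error(belief, truth):
    """Distance between a belief and the real vertex."""
    return math.hypot(belief[0] - truth[0], belief[1] - truth[1])


truth = (1.0, 1.0)                        # a vertex of the real object
start = (1.1, 0.9)                        # the unloaded estimate
sig_a, sig_b = (2.0, 1.5), (0.0, 1.5)     # each network's optimal signaling vertex

calm = settle(start, truth, sig_a, sig_b)                    # signaling load only
hostile = settle(start, truth, sig_a, sig_b, repulsion=0.5)  # plus partisan load
print("signaling only:", calm, error(calm[0], truth))
print("with antagonism:", hostile, error(hostile[0], truth))
```

With these made-up numbers, signaling load alone leaves each network's belief halfway between the real vertex and its own signaling vertex, and adding repulsion pushes both beliefs further from the truth and from each other. A different choice of force law would move the equilibria but not the qualitative point.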

Epistemic load degrades the quality of knowledge right across society and in all of the conflicting trust networks, even if one is less bad than the other. This last point is critical, but usually missed by partisans. Society becomes like a ship trying to navigate the reef with a distorted map: unless providence intervenes, it's sure to run aground. And all this is before we consider the impact of foul play. A signaling regime with potent effects on mass belief is practically begging political actors, both state and non-state, to manipulate it for their own nefarious purposes, causing further degradation. I'll illustrate in the next section.

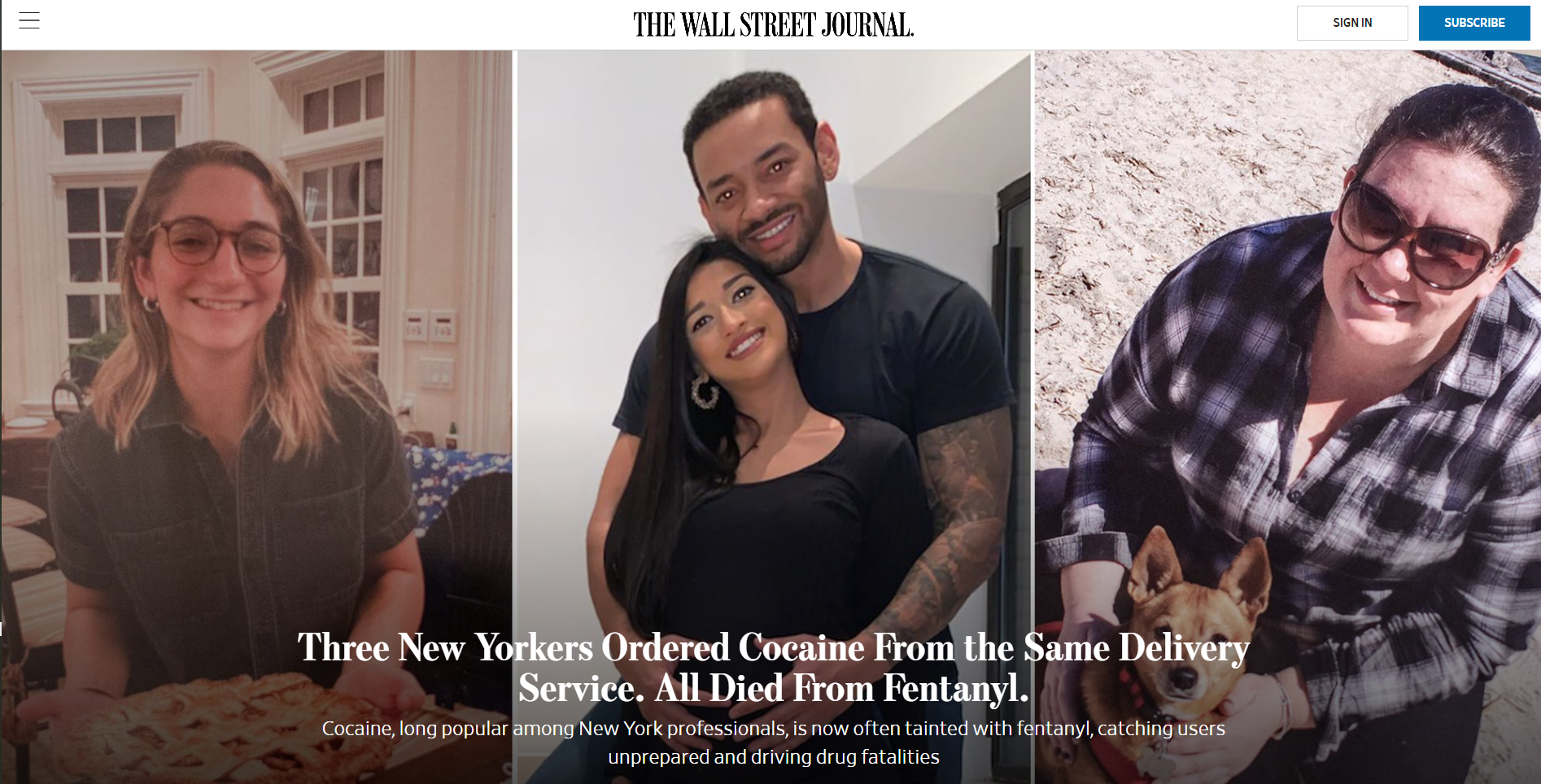

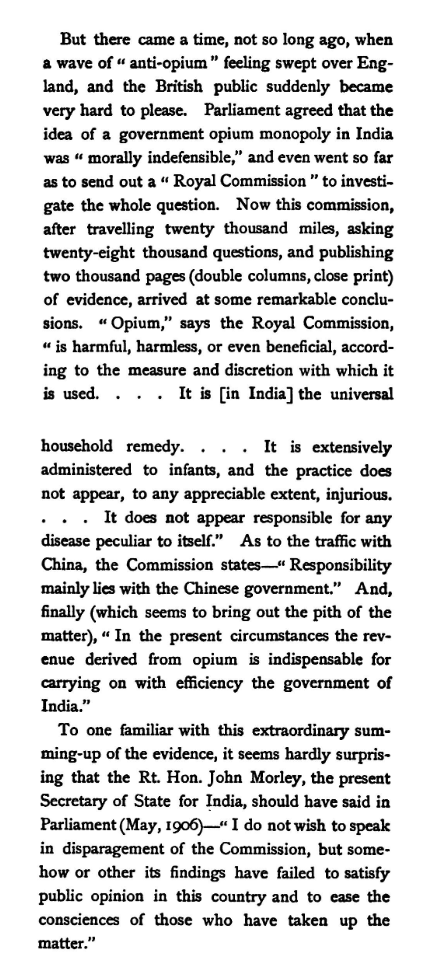

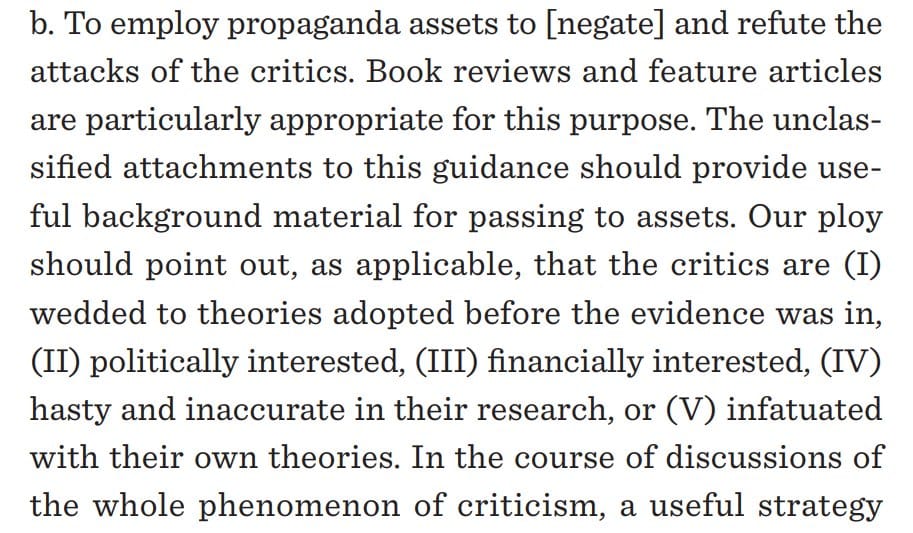

E. Let's Deal Drugs (Hacking Trust Networks)